Tree Models

Hetero SecureBoost

Gradient Boosting Decision Tree (GBDT) is a widely used statistical model for classification and regression problems. FATE provides a novel lossless privacy-preserving tree-boosting system known as SecureBoost, described in the paper "SecureBoost: A Lossless Federated Learning Framework".

This federated learning system allows a learning process to be conducted jointly by multiple parties that share some common user samples but hold different feature sets, which corresponds to a vertically partitioned data set. An advantage of SecureBoost is that it provides the same level of accuracy as the non-privacy-preserving approach while revealing no information about private data.

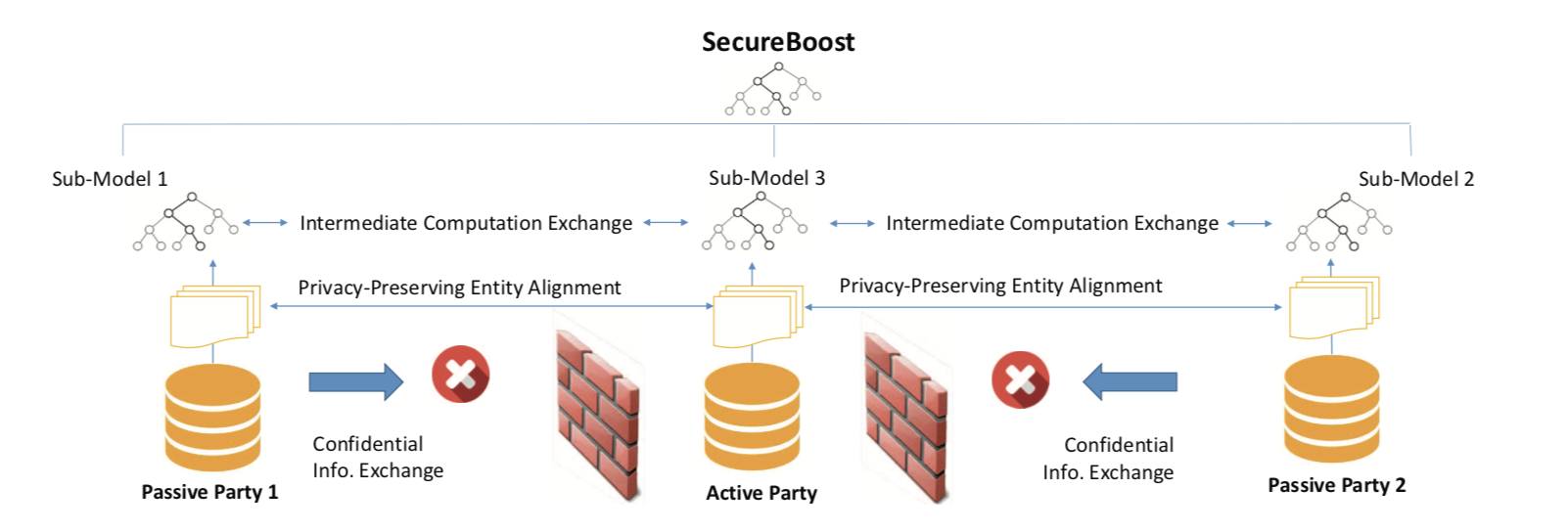

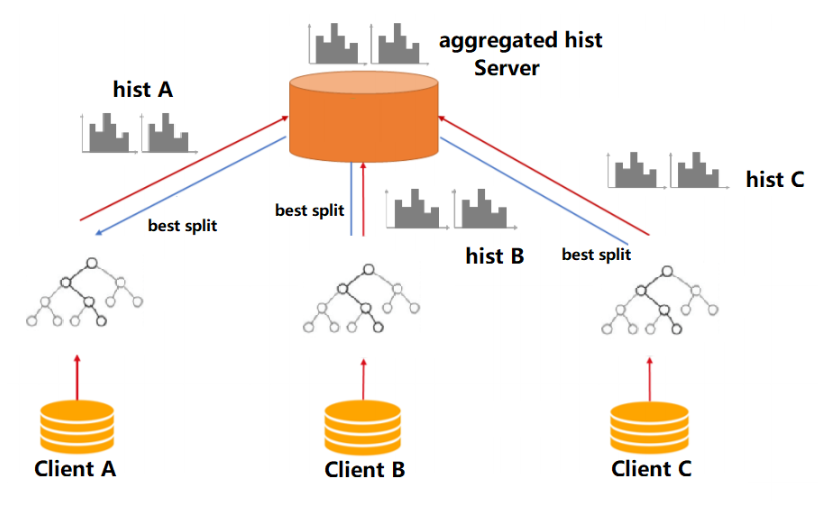

The following figure shows the proposed Federated SecureBoost framework.

- Active Party

  We define the active party as the data provider who holds both a data matrix and the class label. Since the class label information is indispensable for supervised learning, there must be an active party with access to the label y. The active party therefore naturally takes on the role of the dominating server in federated learning.

- Passive Party

  We define a data provider that holds only a data matrix as a passive party. Passive parties play the role of clients in the federated learning setting. They also need a model that predicts the class label y for their own purposes, so they must collaborate with the active party to build a model that predicts y for their future users using their own features.

We align the data samples under an encryption scheme. A privacy-preserving protocol for inter-database intersection finds the users or data samples shared across the parties without compromising the non-shared parts of their user sets.

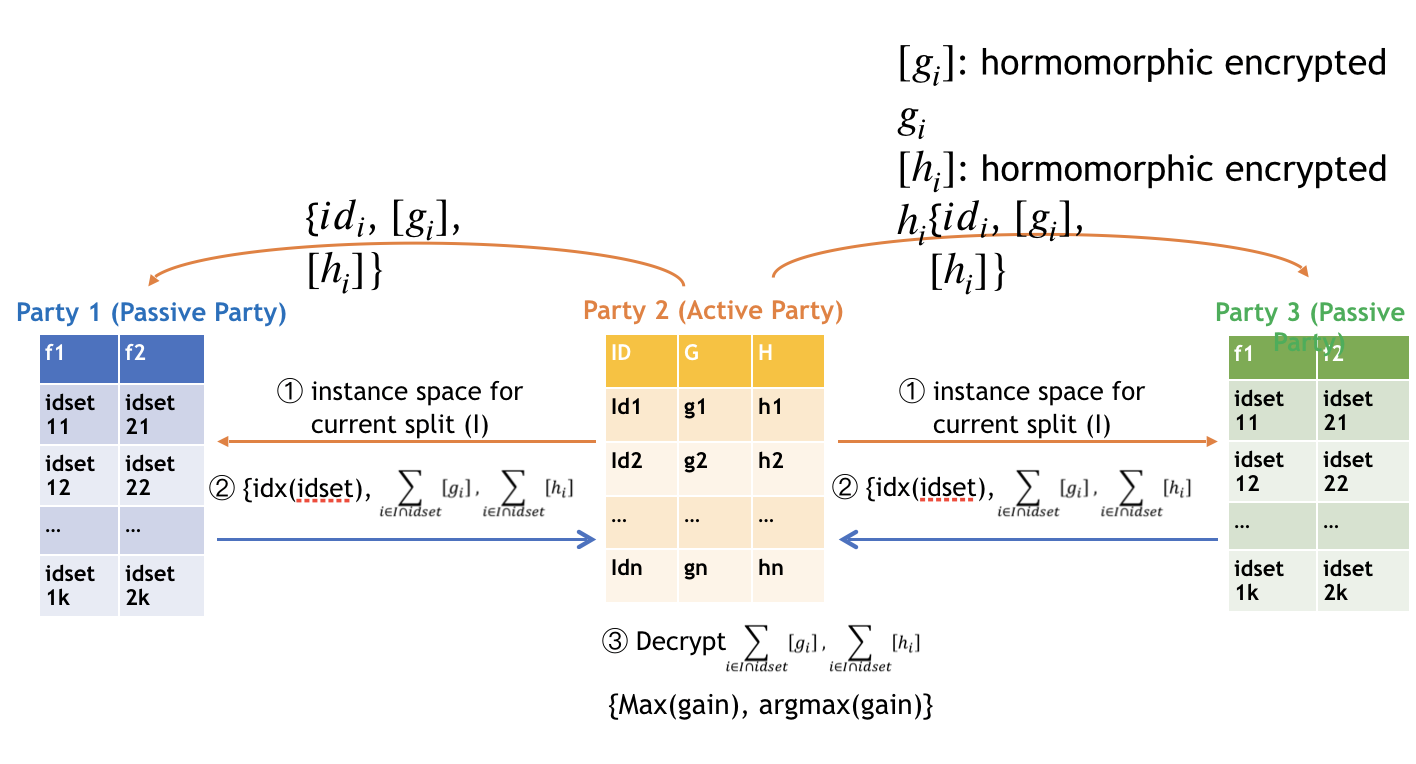
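The intersection protocol is not specified further here. One classic construction for this step is RSA blind-signature private set intersection. The sketch below simulates both parties in a single process. Everything in it is an assumption made for illustration: the function names, the tiny hard-coded primes (far too small for real use) and the choice of SHA-256. It is not FATE's intersection code.

```python
import hashlib
import math
import secrets

# Toy RSA key owned by the party that "signs". These primes are far too small
# for real use; they only keep the numbers readable.
P, Q = 104729, 1299709
N = P * Q
E = 65537
D = pow(E, -1, (P - 1) * (Q - 1))        # private exponent (Python 3.8+)


def h(x: str) -> int:
    """Hash an ID into Z_N."""
    return int.from_bytes(hashlib.sha256(x.encode()).digest(), "big") % N


def h2(v: int) -> str:
    """Second hash applied to signatures before they are compared."""
    return hashlib.sha256(str(v).encode()).hexdigest()


def random_unit() -> int:
    """Random blinding factor that is invertible modulo N."""
    while True:
        r = secrets.randbelow(N - 2) + 2
        if math.gcd(r, N) == 1:
            return r


def psi(requester_ids, signer_ids):
    # Requester: blind each hashed ID so the signer cannot recognise it.
    rs = [random_unit() for _ in requester_ids]
    blinded = [h(x) * pow(r, E, N) % N for x, r in zip(requester_ids, rs)]
    # Signer: apply the private key to the blinded values.
    signed = [pow(b, D, N) for b in blinded]
    # Requester: remove r, leaving H(x)^D mod N, then hash once more.
    tokens = [h2(s * pow(r, -1, N) % N) for s, r in zip(signed, rs)]
    # Signer: publish hashed signatures of its own IDs; only matches coincide.
    signer_tokens = {h2(pow(h(y), D, N)) for y in signer_ids}
    return sorted(x for x, t in zip(requester_ids, tokens) if t in signer_tokens)


print(psi(["u1", "u2", "u3", "u5"], ["u2", "u3", "u4"]))   # ['u2', 'u3']
```

The requester learns which of its IDs are shared. The signer only ever sees blinded values, so under the usual semi-honest assumptions it does not learn the requester's non-shared IDs.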

To ensure security, passive parties cannot access the gradients and hessians directly. We use an XGBoost-like tree-learning algorithm. To keep the gradients and hessians confidential, the active party encrypts them and then sends the encrypted [gradient] and [hessian] to the passive parties. Each passive party then works through three steps:

- It uses [gradient] and [hessian] to compute encrypted feature histograms.
- It encodes each (feature, split_bin_val) pair and records the encodings in a (feature, split_bin_val) lookup table.
- It sends the encoded (feature, split_bin_val) values, together with the feature histograms, to the active party.

After receiving the feature histograms from the passive parties, the active party decrypts them and finds the split with the best gain. If the best-gain feature belongs to a passive party, the active party sends the encoded (feature, split_bin_val) back to the owner party. The following figure shows the process of finding a split in federated tree building.

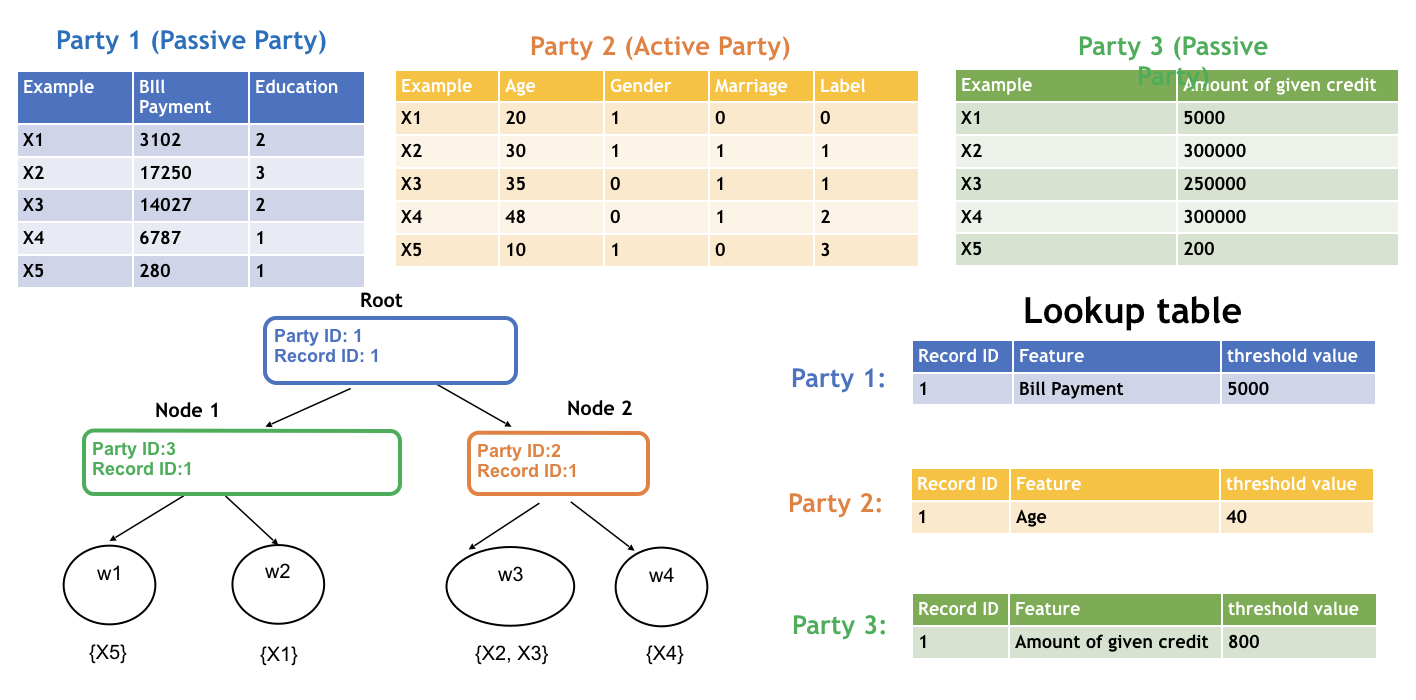
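The exchange above can be simulated in one process with any additively homomorphic cipher. The sketch below makes several illustrative assumptions rather than following FATE's implementation:

- a from-scratch toy Paillier scheme with tiny primes;
- fixed-point encoding of floats;
- random hex strings as the encoded (feature, split_bin_val) ids;
- a gain that keeps only an L2 term `lam`;
- the function names themselves.

```python
import math
import secrets

# ---- toy Paillier keys (tiny primes: illustration only, NOT secure) ----
P, Q = 104729, 1299709
N, N2 = P * Q, (P * Q) ** 2
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)   # private
MU = pow(LAM, -1, N)                                  # private; valid since g = N + 1
SCALE = 10 ** 6                                       # fixed-point scale for floats


def encrypt(x: float) -> int:
    m = round(x * SCALE) % N                          # negatives wrap around modulo N
    r = secrets.randbelow(N - 1) + 1
    while math.gcd(r, N) != 1:
        r = secrets.randbelow(N - 1) + 1
    return (1 + m * N) * pow(r, N, N2) % N2


def decrypt(c: int) -> float:
    m = (pow(c, LAM, N2) - 1) // N * MU % N
    return (m - N if m > N // 2 else m) / SCALE


def add(c1: int, c2: int) -> int:
    """Enc(a) * Enc(b) mod N^2 decrypts to a + b."""
    return c1 * c2 % N2


def passive_side(features, enc_g, enc_h):
    """Passive party: encrypted histograms -> cumulative left sums keyed by opaque codes."""
    lookup, msg = {}, []
    for fname, bins in features.items():
        n_bins = max(bins) + 1
        hist = [[encrypt(0.0), encrypt(0.0)] for _ in range(n_bins)]
        for b, cg, ch in zip(bins, enc_g, enc_h):
            hist[b] = [add(hist[b][0], cg), add(hist[b][1], ch)]
        acc_g, acc_h = encrypt(0.0), encrypt(0.0)
        for split_bin in range(n_bins - 1):           # left child = samples in bins <= split_bin
            acc_g, acc_h = add(acc_g, hist[split_bin][0]), add(acc_h, hist[split_bin][1])
            code = secrets.token_hex(8)               # only this party can map it back
            lookup[code] = (fname, split_bin)
            msg.append((code, acc_g, acc_h))
    return lookup, msg


def active_side(msg, G, H, lam=0.1):
    """Active party: decrypt left sums and return the code with the best gain."""
    def score(g, h):
        return g * g / (h + lam)

    best_code, best_gain = None, float("-inf")
    for code, cg, ch in msg:
        gl, hl = decrypt(cg), decrypt(ch)
        gain = score(gl, hl) + score(G - gl, H - hl) - score(G, H)
        if gain > best_gain:
            best_code, best_gain = code, gain
    return best_code, best_gain


grad = [0.4, -0.3, 0.2, -0.5]                         # active party's plaintext g, h
hess = [0.24, 0.21, 0.16, 0.25]
enc_g, enc_h = [encrypt(g) for g in grad], [encrypt(h) for h in hess]
lookup, msg = passive_side({"x1": [0, 1, 0, 1], "x2": [0, 0, 1, 2]}, enc_g, enc_h)
code, gain = active_side(msg, sum(grad), sum(hess))
print(lookup[code])   # ('x1', 0): the owner decodes the winning split
```

The active party only sees opaque codes and aggregated sums. The passive party never sees plaintext gradients and keeps the mapping from code to (feature, split_bin_val) to itself.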

The parties continue the split-finding process until tree construction finishes. Each party knows the detailed split information only for the tree nodes whose split features it provides. The following figure shows the final structure of a single decision tree.

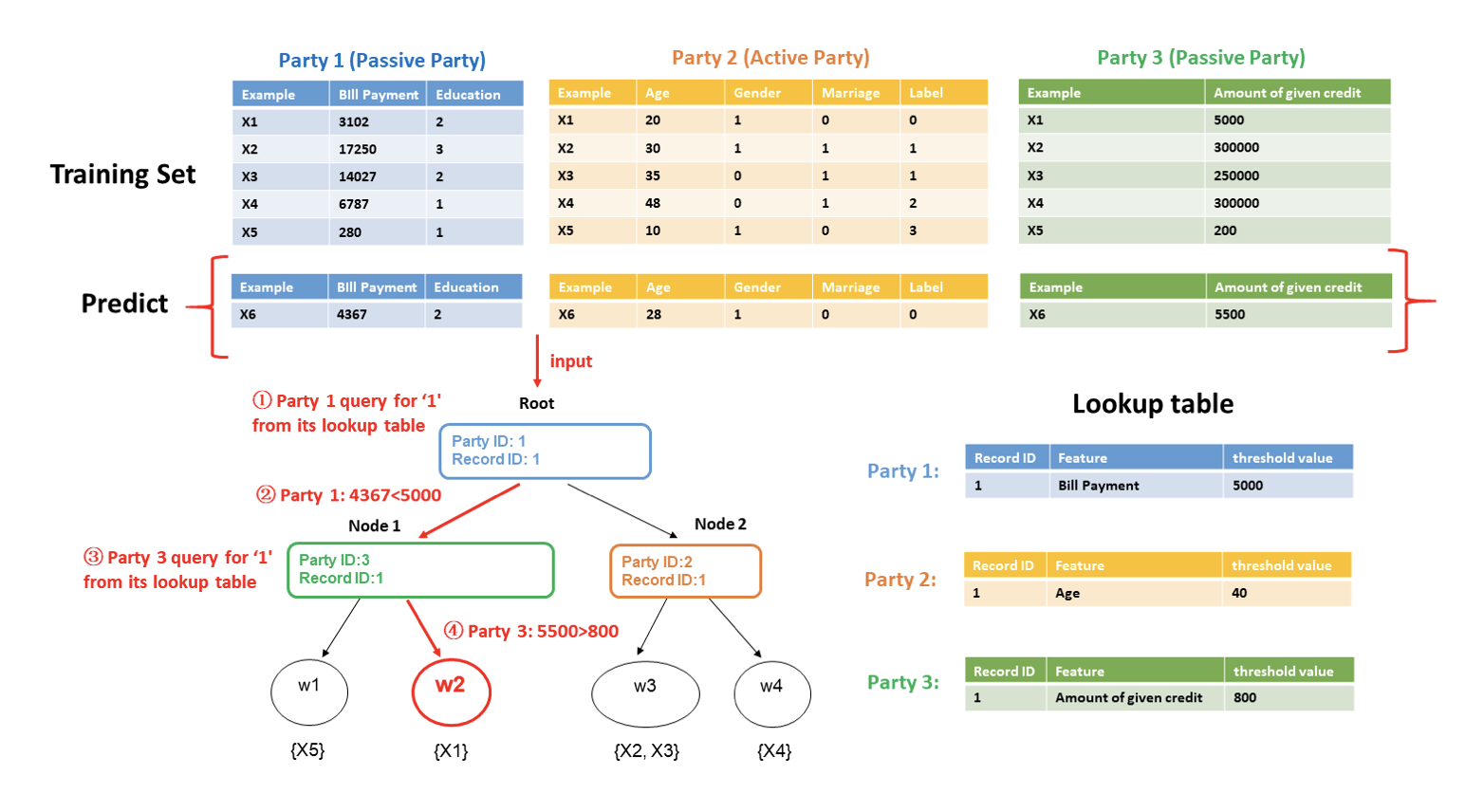

To classify a new instance with the learned model, the active party first determines which party the current tree node belongs to. If the current node belongs to the active party, the active party uses its own (feature, split_bin_val) lookup table to decide whether to go to the left or the right child node. Otherwise, the active party sends the node id to the designated passive party, which checks its lookup table and sends back the branch the instance should follow. This process repeats until the current node is a leaf. The following figure shows the federated inference process.
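The routing logic can be sketched as a local simulation. The following are hypothetical: the `Node` layout, the party names `active` and `passive`, the feature values, and the `<=` convention for going left. The point is that each party consults only its own lookup table and returns only a direction.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass
class Node:
    owner: str                      # party whose feature splits this node
    left: Optional[int] = None
    right: Optional[int] = None
    weight: Optional[float] = None  # set only on leaves (held by the active party)


# Tree shape known to the active party; split details stay with their owners.
tree: Dict[int, Node] = {
    0: Node(owner="active", left=1, right=2),
    1: Node(owner="passive", left=3, right=4),
    2: Node(owner="active", weight=0.7),
    3: Node(owner="active", weight=-0.4),
    4: Node(owner="active", weight=0.1),
}

# Private lookup tables: node id -> (feature, split value). Hypothetical values.
lookup_tables: Dict[str, Dict[int, Tuple[str, float]]] = {
    "active": {0: ("age", 40.0)},
    "passive": {1: ("income", 5000.0)},
}

# Each party's own features for the instance being classified.
party_features: Dict[str, Dict[str, float]] = {
    "active": {"age": 35.0},
    "passive": {"income": 7200.0},
}


def goes_left(party: str, node_id: int) -> bool:
    """Runs at `party`: uses only its own table and reveals only a direction."""
    feature, split_val = lookup_tables[party][node_id]
    return party_features[party][feature] <= split_val


def predict(tree: Dict[int, Node]) -> float:
    node_id = 0
    while tree[node_id].weight is None:           # walk until a leaf is reached
        node = tree[node_id]
        # Active-party nodes are resolved locally; others are "sent" to their owner.
        node_id = node.left if goes_left(node.owner, node_id) else node.right
    return tree[node_id].weight


print(predict(tree))   # 0.1: age 35 <= 40 goes left, income 7200 > 5000 goes right
```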

By following the SecureBoost framework, multiple parties can jointly build a tree ensemble model in federated learning without leaking private data. To learn more about the algorithm, see the paper referenced above.

Optimization in Parallel Learning

SecureBoost uses a data-parallel learning algorithm to build the decision trees in every party. In each party, the data-parallel procedure is:

- Every party uses the mapPartitions API to generate feature histograms for each partition of its data.

- The reduce API merges all local feature histograms into global histograms.

- The best splits are found from the merged global histograms through federated learning, and the splits are then performed.
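Those three steps can be mimicked with plain Python. In the sketch below, `partition_histogram` plays the role of the per-partition function handed to mapPartitions, and `functools.reduce` stands in for the distributed reduce. The data layout and names are assumptions, not FATE's computing API.

```python
from functools import reduce
from typing import List, Tuple

Sample = Tuple[List[int], float, float]    # (bin index per feature, gradient, hessian)


def partition_histogram(partition: List[Sample], n_features: int, n_bins: int):
    """Map step: per-partition histogram of (sum_g, sum_h) for every feature/bin."""
    hist = [[[0.0, 0.0] for _ in range(n_bins)] for _ in range(n_features)]
    for bins, g, h in partition:
        for f, b in enumerate(bins):
            hist[f][b][0] += g
            hist[f][b][1] += h
    return hist


def merge(a, b):
    """Reduce step: element-wise sum of two histograms."""
    return [[[x[0] + y[0], x[1] + y[1]] for x, y in zip(fa, fb)] for fa, fb in zip(a, b)]


partitions = [
    [([0, 1], 0.5, 0.25), ([1, 1], -0.5, 0.25)],
    [([1, 0], 0.25, 0.1875)],
]
local = [partition_histogram(p, n_features=2, n_bins=2) for p in partitions]
global_hist = reduce(merge, local)
print(global_hist[0])   # [[0.5, 0.25], [-0.25, 0.4375]]
```

The merge is an element-wise sum, so it is associative and commutative. That is what lets partitions be reduced in any order.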

Applications

Hetero SecureBoost supports the following applications.

- binary classification, the objective function is sigmoid cross-entropy

- multi-class classification, the objective function is softmax cross-entropy

- regression, the objective function includes least-squared-error-loss, least-absolute-error-loss, huber-loss, tweedie-loss, fair-loss, log-cosh-loss
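Each objective reaches tree building only through per-sample first and second derivatives (gradient and hessian) of the loss with respect to the raw score. The sketch below uses the standard textbook derivatives for three of the listed objectives. The function names are illustrative, and FATE's loss classes may use different constant factors (for example, for least squares).

```python
import math
from typing import List, Tuple


def sigmoid_ce(y: int, score: float) -> Tuple[float, float]:
    """Binary: sigmoid cross-entropy, y in {0, 1}."""
    p = 1.0 / (1.0 + math.exp(-score))
    return p - y, p * (1.0 - p)


def softmax_ce(y: int, scores: List[float]) -> Tuple[List[float], List[float]]:
    """Multi-class: softmax cross-entropy, one (g, h) pair per class."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]        # shift for numerical stability
    total = sum(exps)
    probs = [e / total for e in exps]
    grads = [p - (1.0 if k == y else 0.0) for k, p in enumerate(probs)]
    hess = [p * (1.0 - p) for p in probs]           # diagonal approximation
    return grads, hess


def least_squares(y: float, pred: float) -> Tuple[float, float]:
    """Regression: 0.5 * (pred - y)^2."""
    return pred - y, 1.0


print(sigmoid_ce(1, 0.0))        # (-0.5, 0.25)
print(least_squares(3.0, 2.5))   # (-0.5, 1.0)
```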

Other features

- Column sub-sample

- Control the number of nodes split in parallel at each layer by setting the max_split_nodes parameter, to avoid exceeding the memory limit

- Support feature importance calculation

- Support building a model with multiple hosts and a single guest

- Support different encryption modes to balance speed and security

- Support missing values in the training and prediction processes

- Support evaluating training and validation data during the training process

- Support another homomorphic encryption method called "Iterative

- Support early stopping in FATE-1.4; to use early stopping, see Boosting Tree Param

- Support sparse data optimization in FATE-1.5. You can activate it by setting "sparse_optimization" to true in the conf. Note that this feature may increase memory consumption.

- Support feature subsample random seed setting in FATE-1.5

- Support feature binning error setting

- Support GOSS sampling in FATE-1.6

- Support cipher compressing and g, h packing in FATE-1.7
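GOSS (Gradient-based One-Side Sampling, introduced with LightGBM) works in three moves:

- keep the samples with the largest absolute gradients;
- randomly sample from the rest;
- up-weight the sampled small-gradient samples so that gradient sums stay approximately unbiased.

The sketch below follows that standard recipe. `top_rate`, `other_rate` and the function name are illustrative and are not taken from this page.

```python
import random
from typing import List, Tuple


def goss(grads: List[float], hess: List[float], top_rate: float, other_rate: float,
         seed: int = 0) -> Tuple[List[int], List[float], List[float]]:
    """Return selected sample indices and their (re-weighted) g and h."""
    n = len(grads)
    order = sorted(range(n), key=lambda i: abs(grads[i]), reverse=True)
    n_top = int(n * top_rate)
    n_other = int(n * other_rate)
    top = order[:n_top]                                        # always kept
    rest = random.Random(seed).sample(order[n_top:], n_other)  # random small-gradient subset
    amplify = (1.0 - top_rate) / other_rate                    # compensates for dropped samples
    idx = top + rest
    g = [grads[i] for i in top] + [grads[i] * amplify for i in rest]
    h = [hess[i] for i in top] + [hess[i] * amplify for i in rest]
    return idx, g, h


grads = [0.9, -0.1, 0.05, -0.8, 0.2, 0.01, -0.3, 0.02, 0.6, -0.04]
hess = [0.25] * 10
idx, g, h = goss(grads, hess, top_rate=0.2, other_rate=0.2)
print(idx[:2])   # [0, 3]: the two largest |g| are always kept
```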

Homo SecureBoost

Unlike Hetero SecureBoost, Homo SecureBoost operates in a different setting: every participant (client) holds data that shares the same feature space, and the participants jointly train a GBDT model without leaking any data samples.

The figure below shows the overall framework of the homo SecureBoost algorithm.

- Client

  Clients are the participants who hold their own labeled samples. Samples from all clients share the same feature space. The clients aim to build a more powerful model together without leaking local samples, and they share the same trained model after learning.

- Server

  Data could leak if all participants sent their local histograms (which contain sums of gradients and hessians) to each other, because features and labels can sometimes be inferred from gradient sums and hessian sums. To ensure security during learning, the server therefore uses secure aggregation to combine all participants' local histograms. The server obtains the global histogram without seeing any local histogram, then finds the best splits and broadcasts them to the clients. The server collaborates with all clients throughout the learning process.

The key steps of learning a Homo SecureBoost model are described below:

- Clients and Server initialize local settings. Clients and Server apply homo feature binning to get the binning points for all features, then use them to pre-process local samples.

-

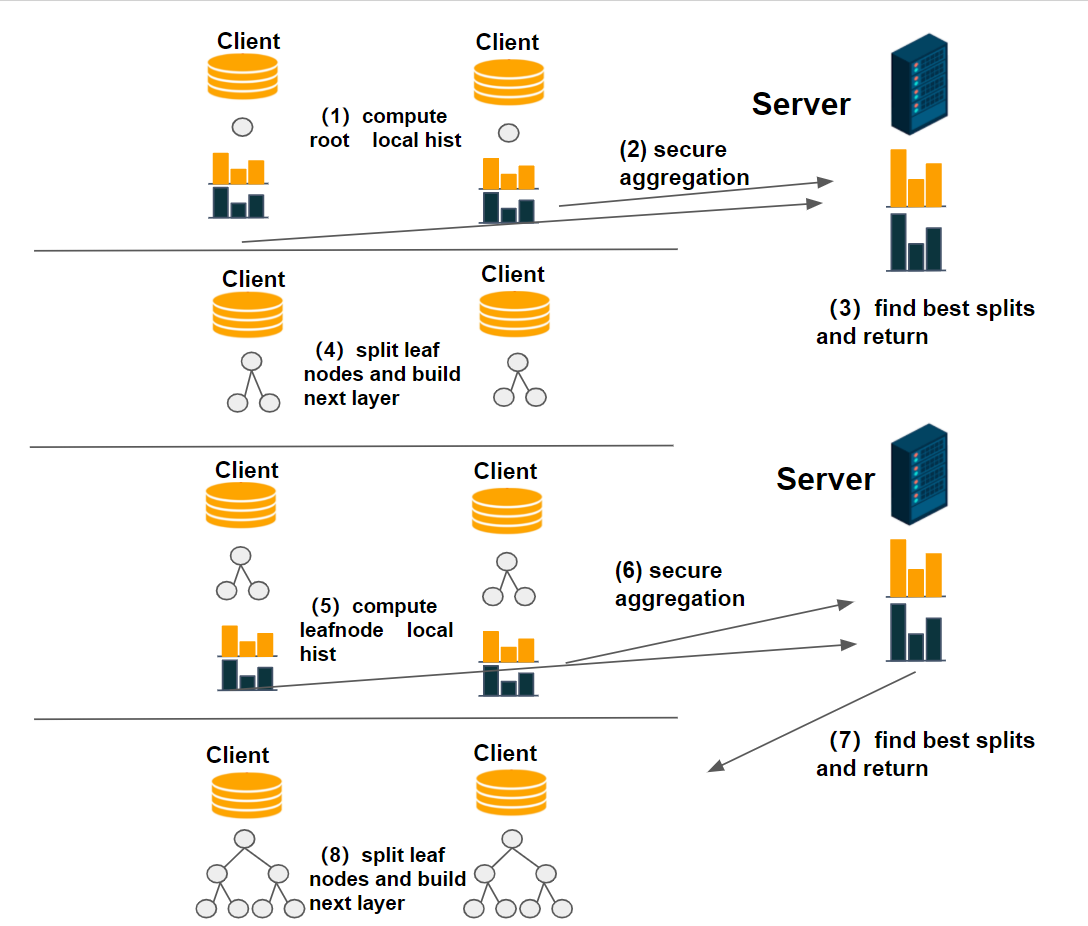

Clients and Server build a decision tree collaboratively:

a. Clients compute local histograms for the current leaf nodes (left nodes or the root node).

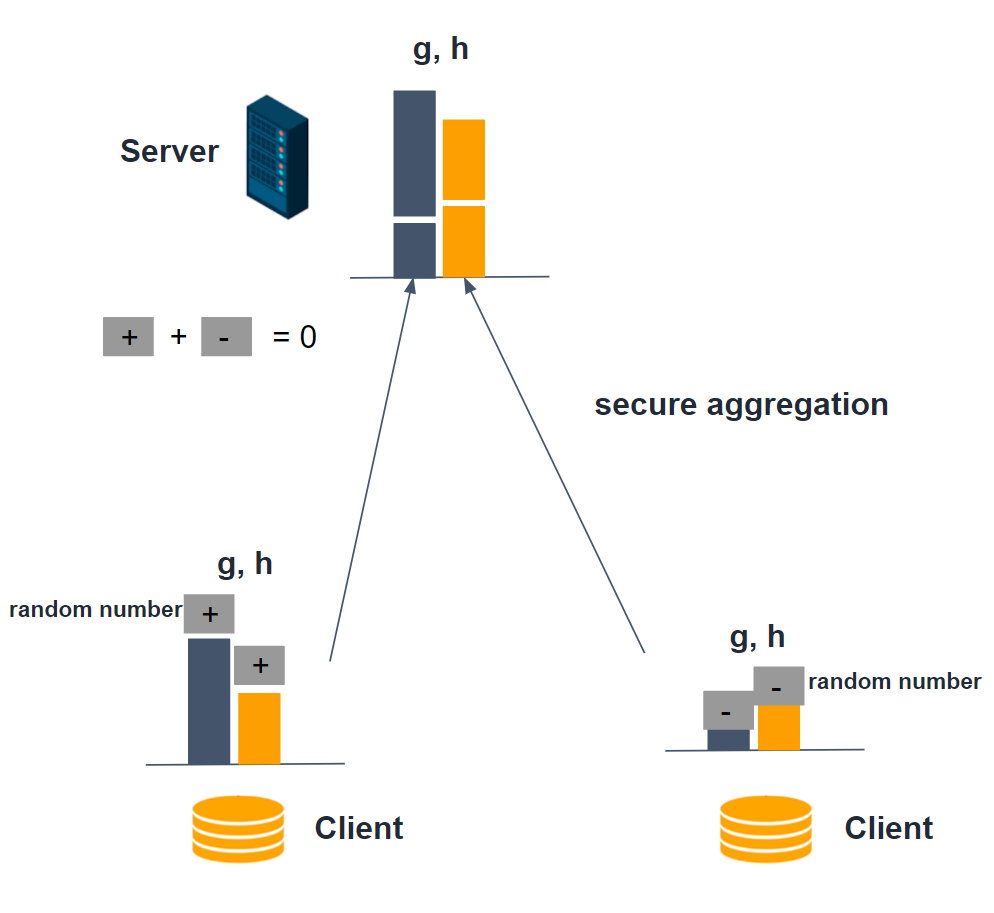

b. The server applies secure aggregation: random numbers are added to every local histogram, and these numbers cancel each other out when the histograms are summed. In this way, the server obtains the global histogram without knowing any local histogram, which prevents data leakage. The figure below shows how histogram secure aggregation is conducted.

c. The server performs histogram subtraction: it obtains the right-node histograms by subtracting the left-node histograms from the parent-node histograms. The server then finds the best split points and broadcasts them to the clients.

d. After receiving the best split points, clients build the next layer of the current decision tree and reassign samples. If the current decision tree reaches the maximum depth or another stop condition is met, building of the current tree stops; otherwise, go back to step a. The figure below shows the procedure of fitting a decision tree.

- If the number of trees reaches the maximum, or the loss converges, the Homo SecureBoost fitting process stops.

By following the steps above, clients are able to jointly build a GBDT model. Every client can then perform inference on a new instance locally.
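Step b relies on masks that cancel in the sum. One standard way to get this is pairwise masking: each pair of clients shares a random vector that one client adds and the other subtracts. The sketch below is an illustration, not FATE's secure-aggregation code, and it makes two assumptions:

- the shared vectors already exist; in practice they would typically come from a key agreement, and here a seeded generator stands in;
- histograms are fixed-point integers modulo `MOD`.

```python
import random
from typing import Dict, List, Tuple

MOD = 2 ** 32   # values and masks live in Z_MOD (assumed fixed-point encoding)


def pairwise_masks(client_ids: List[int], length: int,
                   seed: int = 42) -> Dict[Tuple[int, int], List[int]]:
    """One shared random vector per client pair (i < j); stands in for a key agreement."""
    rng = random.Random(seed)
    return {(i, j): [rng.randrange(MOD) for _ in range(length)]
            for i in client_ids for j in client_ids if i < j}


def mask(cid: int, hist: List[int], masks) -> List[int]:
    """Client side: add masks shared with higher ids, subtract those shared with lower ids."""
    out = list(hist)
    for (i, j), m in masks.items():
        if cid == i:
            out = [(v + r) % MOD for v, r in zip(out, m)]
        elif cid == j:
            out = [(v - r) % MOD for v, r in zip(out, m)]
    return out


def aggregate(masked: List[List[int]]) -> List[int]:
    """Server side: the pairwise masks cancel, leaving only the global sum."""
    return [sum(col) % MOD for col in zip(*masked)]


local_hists = {0: [3, 1, 4], 1: [1, 5, 9], 2: [2, 6, 5]}   # e.g. scaled gradient sums per bin
masks = pairwise_masks(list(local_hists), length=3)
masked = [mask(cid, hist, masks) for cid, hist in local_hists.items()]
print(aggregate(masked))   # [6, 12, 18]
```

Each masked histogram on its own looks uniformly random to the server. Only the sum over all clients is meaningful.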

Optimization in learning

Homo SecureBoost utilizes data parallelization and histogram subtraction to accelerate the learning process.

- Every party uses the mapPartitions and reduce APIs to generate local feature histograms; only samples in left nodes are used to compute feature histograms.

- The server aggregates all local histograms into global histograms, then obtains the sibling histograms by subtracting left-node histograms from parent histograms.

- The server finds the best splits from the merged global histograms, then broadcasts them.

- Node subtraction roughly halves the computational and transmission costs.
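The subtraction trick is plain arithmetic. Every sample in a parent node goes either left or right, so for each bin the right child's (sum_g, sum_h) equals the parent's minus the left child's. A minimal sketch with made-up numbers follows; the names and layout are illustrative.

```python
from typing import List, Tuple

Hist = List[Tuple[float, float]]   # one (sum_g, sum_h) pair per bin


def sibling_histogram(parent: Hist, left: Hist) -> Hist:
    """Right-child histogram derived without touching the right child's samples."""
    return [(pg - lg, ph - lh) for (pg, ph), (lg, lh) in zip(parent, left)]


parent = [(1.5, 2.0), (-0.5, 1.0), (0.25, 0.75)]
left = [(1.0, 1.25), (-0.75, 0.5), (0.0, 0.25)]
print(sibling_histogram(parent, left))   # [(0.5, 0.75), (0.25, 0.5), (0.25, 0.5)]
```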

Applications

Homo SecureBoost supports the following applications:

- binary classification, the objective function is sigmoid cross-entropy

- multi-class classification, the objective function is softmax cross-entropy

- regression, the objective function includes least-squared-error-loss, least-absolute-error-loss, huber-loss, tweedie-loss, fair-loss, log-cosh-loss

Other features

- The server uses secure aggregation to aggregate clients' histograms and losses, ensuring data security

- Column sub-sample

- Control the number of nodes split in parallel at each layer by setting the max_split_nodes parameter, to avoid exceeding the memory limit

- Support feature importance calculation

- Support building a model with multiple hosts and a single guest

- Support missing values in the training and prediction processes

- Support evaluating training and validation data during the training process

- Support feature subsample random seed setting in FATE-1.5

- Support feature binning error setting.

Param

boosting_param

hetero_deprecated_param_list

homo_deprecated_param_list

Classes

ObjectiveParam (BaseParam)

Define objective parameters used in federated ML.

Parameters

objective : {None, 'cross_entropy', 'lse', 'lae', 'log_cosh', 'tweedie', 'fair', 'huber'}. None in the host's config; must be a str in the guest's config. When task_type is classification, only 'cross_entropy' is supported; the other six types are supported in regression tasks.

params : None or list. Must be a non-empty list when objective is 'tweedie', 'fair', or 'huber'. The first element of the list must be a number greater than 0.0 when objective is 'fair' or 'huber', and a number in [1.0, 2.0) when objective is 'tweedie'.
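The first element of `params` acts as a loss hyperparameter: the Huber delta, the Fair constant c, or the Tweedie variance power. The sketch below gives commonly used gradient/hessian formulas for those objectives plus log-cosh. It uses the pseudo-Huber form in place of Huber and a log-scale raw score for Tweedie. These are standard formulations chosen for illustration, not a transcription of FATE's loss classes.

```python
import math
from typing import Tuple


def pseudo_huber(y: float, pred: float, delta: float) -> Tuple[float, float]:
    """Loss delta^2 * (sqrt(1 + (d/delta)^2) - 1) with d = pred - y."""
    d = pred - y
    scale = 1.0 + (d / delta) ** 2
    return d / math.sqrt(scale), 1.0 / scale ** 1.5


def fair(y: float, pred: float, c: float) -> Tuple[float, float]:
    """Loss c^2 * (|d|/c - ln(1 + |d|/c)) with d = pred - y."""
    d = pred - y
    return c * d / (abs(d) + c), c * c / (abs(d) + c) ** 2


def log_cosh(y: float, pred: float) -> Tuple[float, float]:
    t = math.tanh(pred - y)
    return t, 1.0 - t * t


def tweedie(y: float, log_pred: float, rho: float) -> Tuple[float, float]:
    """rho in [1, 2); the prediction is exp(log_pred)."""
    a = math.exp((1.0 - rho) * log_pred)
    b = math.exp((2.0 - rho) * log_pred)
    return -y * a + b, -y * (1.0 - rho) * a + (2.0 - rho) * b


print(fair(0.0, 1.0, c=1.0))   # (0.5, 0.25)
print(log_cosh(2.0, 2.0))      # (0.0, 1.0)
```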

Source code in federatedml/param/boosting_param.py

```python
class ObjectiveParam(BaseParam):
    """
    Define objective parameters used in federated ml.

    Parameters
    ----------
    objective : {None, 'cross_entropy', 'lse', 'lae', 'log_cosh', 'tweedie', 'fair', 'huber'}
        None in host's config, should be str in guest's config.
        when task_type is classification, only support 'cross_entropy',
        other 6 types support in regression task

    params : None or list
        should be non empty list when objective is 'tweedie','fair','huber',
        first element of list should be a float-number greater than 0.0 when objective is 'fair', 'huber',
        first element of list should be a float-number in [1.0, 2.0) when objective is 'tweedie'
    """

    def __init__(self, objective='cross_entropy', params=None):
        self.objective = objective
        self.params = params

    def check(self, task_type=None):
        if self.objective is None:
            return True

        descr = "objective param's"

        LOGGER.debug('check objective {}'.format(self.objective))

        if task_type not in [consts.CLASSIFICATION, consts.REGRESSION]:
            self.objective = self.check_and_change_lower(self.objective,
                                                         ["cross_entropy", "lse", "lae", "huber", "fair",
                                                          "log_cosh", "tweedie"],
                                                         descr)

        if task_type == consts.CLASSIFICATION:
            if self.objective != "cross_entropy":
                raise ValueError("objective param's objective {} not supported".format(self.objective))

        elif task_type == consts.REGRESSION:
            self.objective = self.check_and_change_lower(self.objective,
                                                         ["lse", "lae", "huber", "fair", "log_cosh", "tweedie"],
                                                         descr)

            params = self.params
            if self.objective in ["huber", "fair", "tweedie"]:
                if type(params).__name__ != 'list' or len(params) < 1:
                    raise ValueError(
                        "objective param's params {} not supported, should be non-empty list".format(params))

                if type(params[0]).__name__ not in ["float", "int", "long"]:
                    raise ValueError("objective param's params[0] {} not supported".format(self.params[0]))

                if self.objective == 'tweedie':
                    if params[0] < 1 or params[0] >= 2:
                        raise ValueError("in tweedie regression, objective params[0] should be in [1, 2)")

                # Only fair and huber require a strictly positive first parameter.
                if self.objective in ('fair', 'huber'):
                    if params[0] <= 0.0:
                        raise ValueError("in {} regression, objective params[0] should be greater than 0.0".format(
                            self.objective))
        return True
```


DecisionTreeParam (BaseParam)

Define decision tree parameters used in federated ML.

Parameters

criterion_method : {"xgboost"}, default: "xgboost". The criterion function to use.

criterion_params : list or dict. Must be non-empty, with float elements. If a list is given, the first element is the l2 regularization value and the second is the l1 regularization value. If a dict is given, it must contain the keys 'l1' and 'l2'. The l1 and l2 regularization values are non-negative floats. Default: [0.1, 0] or {'l1': 0, 'l2': 0.1}

max_depth : positive integer. The maximum depth of a decision tree. Default: 3

min_sample_split : int. The minimum number of samples a node must contain to be split. Default: 2

min_impurity_split : float. The minimum gain a single split must reach. Default: 1e-3

min_child_weight : float. The minimum sum of hessians required in each child node. Default: 0

min_leaf_node : int. A node becomes a leaf when it contains no more than min_leaf_node samples. Default: 1

max_split_nodes : positive integer. For memory reasons, splits are searched for at most max_split_nodes nodes in parallel per batch. Default: 65536

feature_importance_type : {'split', 'gain'}. If 'split', feature importance is computed from the number of times a feature is used for splitting; if 'gain', it is computed from the feature's split gain. Default: 'split'

Due to security concerns, the training strategy of Hetero-SBT was adjusted in FATE-1.8, and this parameter is no longer used when running Hetero-SBT. In Hetero-SBT of FATE-1.8, the guest computes split and gain importance for its local features and receives anonymous feature importance results from the hosts, while the hosts compute split importance for their local features.

use_missing : bool, only True or False accepted. Whether to use missing values in the training process. Default: False

zero_as_missing : bool. Whether to treat 0 as a missing value; used only if use_missing=True. Default: False

deterministic : bool. Ensures stability when computing histograms. Set this to True to get stable results with the same data and the same parameters, at the possible cost of slower computation.
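To make `criterion_params`, `min_child_weight` and `min_impurity_split` concrete, here is a sketch of an XGBoost-style split gain with L2 and L1 regularization. It reads `criterion_params` in the documented list order [l2, l1]. The gain is score(left) + score(right) - score(parent), without the 1/2 factor and complexity penalty used in the XGBoost paper. That choice, the exact threshold comparisons and all function names are assumptions to check against the source.

```python
from typing import Optional, Sequence


def threshold_l1(g: float, alpha: float) -> float:
    """Soft-threshold a gradient sum by the L1 term."""
    if g > alpha:
        return g - alpha
    if g < -alpha:
        return g + alpha
    return 0.0


def node_score(g: float, h: float, l2: float, l1: float) -> float:
    return threshold_l1(g, l1) ** 2 / (h + l2)


def leaf_weight(g: float, h: float, l2: float, l1: float) -> float:
    return -threshold_l1(g, l1) / (h + l2)


def split_gain(gl: float, hl: float, g: float, h: float,
               criterion_params: Sequence[float] = (0.1, 0.0),
               min_child_weight: float = 0.0,
               min_impurity_split: float = 1e-3) -> Optional[float]:
    """Gain of splitting a node with sums (g, h) into left (gl, hl) and right (g-gl, h-hl).

    Returns None when the split is not allowed.
    """
    l2, l1 = criterion_params                 # list order: [l2, l1]
    gr, hr = g - gl, h - hl
    if hl < min_child_weight or hr < min_child_weight:
        return None                           # a child has too little hessian mass
    gain = (node_score(gl, hl, l2, l1) + node_score(gr, hr, l2, l1)
            - node_score(g, h, l2, l1))
    return gain if gain >= min_impurity_split else None


print(split_gain(0.6, 0.40, -0.2, 0.86))   # approx. 1.8212
print(leaf_weight(0.6, 0.40, 0.1, 0.0))    # approx. -1.2
```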

Source code in federatedml/param/boosting_param.py

```python
class DecisionTreeParam(BaseParam):
    """
    Define decision tree parameters used in federated ml.

    Parameters
    ----------
    criterion_method : {"xgboost"}, default: "xgboost"
        the criterion function to use

    criterion_params: list or dict
        should be non empty and elements are float-numbers,
        if a list is offered, the first one is l2 regularization value, and the second one is
        l1 regularization value.
        if a dict is offered, make sure it contains key 'l1', and 'l2'.
        l1, l2 regularization values are non-negative floats.
        default: [0.1, 0] or {'l1': 0, 'l2': 0.1}

    max_depth: positive integer
        the max depth of a decision tree, default: 3

    min_sample_split: int
        least quantity of nodes to split, default: 2

    min_impurity_split: float
        least gain of a single split need to reach, default: 1e-3

    min_child_weight: float
        sum of hessian needed in child nodes. default is 0

    min_leaf_node: int
        when samples no more than min_leaf_node, it becomes a leaf, default: 1

    max_split_nodes: positive integer
        we will use no more than max_split_nodes to
        parallel finding their splits in a batch, for memory consideration. default is 65536

    feature_importance_type: {'split', 'gain'}
        if is 'split', feature_importances calculate by feature split times,
        if is 'gain', feature_importances calculate by feature split gain.
        default: 'split'
        Due to the safety concern, we adjust training strategy of Hetero-SBT in FATE-1.8,
        When running Hetero-SBT, this parameter is now abandoned.
        In Hetero-SBT of FATE-1.8, guest side will compute split, gain of local features,
        and receive anonymous feature importance results from hosts. Hosts will compute split
        importance of local features.

    use_missing: bool, accepted True, False only, default: False
        use missing value in training process or not.

    zero_as_missing: bool
        regard 0 as missing value or not,
        will be used only if use_missing=True, default: False

    deterministic: bool
        ensure stability when computing histogram. Set this to true to ensure stable result when using
        same data and same parameter. But it may slow down computation.
    """

    def __init__(self, criterion_method="xgboost", criterion_params=[0.1, 0], max_depth=3,
                 min_sample_split=2, min_impurity_split=1e-3, min_leaf_node=1,
                 max_split_nodes=consts.MAX_SPLIT_NODES, feature_importance_type='split',
                 n_iter_no_change=True, tol=0.001, min_child_weight=0,
                 use_missing=False, zero_as_missing=False, deterministic=False):
        super(DecisionTreeParam, self).__init__()

        self.criterion_method = criterion_method
        self.criterion_params = criterion_params
        self.max_depth = max_depth
        self.min_sample_split = min_sample_split
        self.min_impurity_split = min_impurity_split
        self.min_leaf_node = min_leaf_node
        self.min_child_weight = min_child_weight
        self.max_split_nodes = max_split_nodes
        self.feature_importance_type = feature_importance_type
        self.n_iter_no_change = n_iter_no_change
        self.tol = tol
        self.use_missing = use_missing
        self.zero_as_missing = zero_as_missing
        self.deterministic = deterministic

    def check(self):
        descr = "decision tree param"

        self.criterion_method = self.check_and_change_lower(self.criterion_method,
                                                             ["xgboost"],
                                                             descr)

        if len(self.criterion_params) == 0:
            raise ValueError("decision tree param's criterion_params should be non empty")

        if isinstance(self.criterion_params, list):
            assert len(self.criterion_params) == 2, 'length of criterion_param should be 2: l1, l2 regularization ' \
                                                    'values are needed'
            self.check_nonnegative_number(self.criterion_params[0], 'l2 reg value')
            self.check_nonnegative_number(self.criterion_params[1], 'l1 reg value')

        elif isinstance(self.criterion_params, dict):
            assert 'l1' in self.criterion_params and 'l2' in self.criterion_params, 'l1 and l2 keys are needed in ' \
                                                                                    'criterion_params dict'
            # Normalise the dict form to the list order [l2, l1].
            self.criterion_params = [self.criterion_params['l2'], self.criterion_params['l1']]
        else:
            raise ValueError('criterion_params should be a dict or a list contains l1, l2 reg value')

        if type(self.max_depth).__name__ not in ["int", "long"]:
            raise ValueError("decision tree param's max_depth {} not supported, should be integer".format(
                self.max_depth))

        if self.max_depth < 1:
            raise ValueError("decision tree param's max_depth should be positive integer, no less than 1")

        if type(self.min_sample_split).__name__ not in ["int", "long"]:
            raise ValueError("decision tree param's min_sample_split {} not supported, should be integer".format(
                self.min_sample_split))

        if type(self.min_impurity_split).__name__ not in ["int", "long", "float"]:
            raise ValueError("decision tree param's min_impurity_split {} not supported, should be numeric".format(
                self.min_impurity_split))

        if type(self.min_leaf_node).__name__ not in ["int", "long"]:
            raise ValueError("decision tree param's min_leaf_node {} not supported, should be integer".format(
                self.min_leaf_node))

        if type(self.max_split_nodes).__name__ not in ["int", "long"] or self.max_split_nodes < 1:
            raise ValueError(("decision tree param's max_split_nodes {} not supported, " +
                              "should be positive integer between 1 and {}").format(self.max_split_nodes,
                                                                                    consts.MAX_SPLIT_NODES))

        if type(self.n_iter_no_change).__name__ != "bool":
            raise ValueError("decision tree param's n_iter_no_change {} not supported, should be bool type".format(
                self.n_iter_no_change))

        if type(self.tol).__name__ not in ["float", "int", "long"]:
            raise ValueError("decision tree param's tol {} not supported, should be numeric".format(self.tol))

        self.feature_importance_type = self.check_and_change_lower(self.feature_importance_type,
                                                                   ["split", "gain"],
                                                                   descr)
        self.check_nonnegative_number(self.min_child_weight, 'min_child_weight')
        self.check_boolean(self.deterministic, 'deterministic')

        return True
```


BoostingParam (BaseParam)

Basic parameters for boosting algorithms.

Parameters

task_type : {'classification', 'regression'}, default: 'classification'. The task type.

objective_param : ObjectiveParam object, default: ObjectiveParam(). The objective parameters.

learning_rate : float, int or long. The learning rate of SecureBoost. Default: 0.3

num_trees : int or float. The maximum number of boosting rounds. Default: 5

subsample_feature_rate : float. A number in [0, 1]. Default: 1.0

n_iter_no_change : bool. When True, tree building stops once the residual error is less than tol. Default: True

bin_num : positive integer greater than 1. The number of bins used in quantile binning. Default: 32

validation_freqs : None, positive integer, or Python container object. Whether to validate during training.

- If None, no validation is done during training.
- If a positive integer, data is validated every validation_freqs epochs.
- If a container object, data is validated when the epoch belongs to the container; for example, validation_freqs = [10, 15] validates data at epochs 10 and 15.

Default: None
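The three accepted forms of `validation_freqs` can be summarized as a small predicate. This sketch follows the description above. Two details are not taken from this page: the function name, and the assumption that epochs are counted from 0, so that an integer k triggers after every k-th completed epoch.

```python
from collections.abc import Container
from typing import Optional, Union


def need_validation(epoch: int, validation_freqs: Optional[Union[int, Container]]) -> bool:
    """Decide whether to validate at `epoch` (assumed 0-based)."""
    if validation_freqs is None:
        return False                                  # never validate during training
    if isinstance(validation_freqs, int):
        return (epoch + 1) % validation_freqs == 0    # every k-th completed epoch
    return epoch in validation_freqs                  # explicit list/set of epochs


print([e for e in range(20) if need_validation(e, 5)])          # [4, 9, 14, 19]
print([e for e in range(20) if need_validation(e, [10, 15])])   # [10, 15]
```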

Source code in federatedml/param/boosting_param.py

```python
class BoostingParam(BaseParam):
    """
    Basic parameter for Boosting Algorithms

    Parameters
    ----------
    task_type : {'classification', 'regression'}, default: 'classification'
        task type

    objective_param : ObjectiveParam Object, default: ObjectiveParam()
        objective param

    learning_rate : float, int or long
        the learning rate of secure boost. default: 0.3

    num_trees : int or float
        the max number of boosting round. default: 5

    subsample_feature_rate : float
        a float-number in [0, 1], default: 1.0

    n_iter_no_change : bool,
        when True and residual error less than tol, tree building process will stop. default: True

    bin_num: positive integer greater than 1
        bin number used in quantile. default: 32

    validation_freqs: None or positive integer or container object in python
        Do validation in training process or Not.
        if equals None, will not do validation in train process;
        if equals positive integer, will validate data every validation_freqs epochs passes;
        if container object in python, will validate data if epochs belong to this container.
        e.g. validation_freqs = [10, 15], will validate data when epoch equals to 10 and 15.
        Default: None
    """

    def __init__(self, task_type=consts.CLASSIFICATION,
                 objective_param=ObjectiveParam(),
                 learning_rate=0.3, num_trees=5, subsample_feature_rate=1, n_iter_no_change=True,
                 tol=0.0001, bin_num=32,
                 predict_param=PredictParam(), cv_param=CrossValidationParam(),
                 validation_freqs=None, metrics=None, random_seed=100,
                 binning_error=consts.DEFAULT_RELATIVE_ERROR):
        super(BoostingParam, self).__init__()

        self.task_type = task_type
        # Deep copies keep the shared default instances from being mutated.
        self.objective_param = copy.deepcopy(objective_param)
        self.learning_rate = learning_rate
        self.num_trees = num_trees
        self.subsample_feature_rate = subsample_feature_rate
        self.n_iter_no_change = n_iter_no_change
        self.tol = tol
        self.bin_num = bin_num
        self.predict_param = copy.deepcopy(predict_param)
        self.cv_param = copy.deepcopy(cv_param)
        self.validation_freqs = validation_freqs
        self.metrics = metrics
        self.random_seed = random_seed
        self.binning_error = binning_error

    def check(self):
        descr = "boosting tree param's"

        if self.task_type not in [consts.CLASSIFICATION, consts.REGRESSION]:
            raise ValueError("boosting_core tree param's task_type {} not supported, should be {} or {}".format(
                self.task_type, consts.CLASSIFICATION, consts.REGRESSION))

        self.objective_param.check(self.task_type)

        if type(self.learning_rate).__name__ not in ["float", "int", "long"]:
            raise ValueError("boosting_core tree param's learning_rate {} not supported, should be numeric".format(
                self.learning_rate))

        if type(self.subsample_feature_rate).__name__ not in ["float", "int", "long"] or \
                self.subsample_feature_rate < 0 or self.subsample_feature_rate > 1:
            raise ValueError(
                "boosting_core tree param's subsample_feature_rate should be a numeric number between 0 and 1")

        if type(self.n_iter_no_change).__name__ != "bool":
            raise ValueError("boosting_core tree param's n_iter_no_change {} not supported, should be bool type".format(
                self.n_iter_no_change))

        if type(self.tol).__name__ not in ["float", "int", "long"]:
            raise ValueError("boosting_core tree param's tol {} not supported, should be numeric".format(self.tol))

        if type(self.bin_num).__name__ not in ["int", "long"] or self.bin_num < 2:
            raise ValueError(
                "boosting_core tree param's bin_num {} not supported, should be positive integer greater than 1".format(
                    self.bin_num))

        if self.validation_freqs is None:
            pass
        elif isinstance(self.validation_freqs, int):
            if self.validation_freqs < 1:
                raise ValueError("validation_freqs should be larger than 0 when it's integer")
        # The Container ABC lives in collections.abc (needs `import collections.abc`);
        # the old collections.Container alias was removed in Python 3.10.
        elif not isinstance(self.validation_freqs, collections.abc.Container):
            raise ValueError("validation_freqs should be None or positive integer or container")

        if self.metrics is not None and not isinstance(self.metrics, list):
            raise ValueError("metrics should be a list")

        if self.random_seed is not None:
            assert isinstance(self.random_seed, int) and self.random_seed >= 0, 'random seed must be an integer >= 0'

        self.check_decimal_float(self.binning_error, descr)

        return True
```

__init__(self, task_type='classification', objective_param=<federatedml.param.boosting_param.ObjectiveParam object at 0x7f93205ac690>, learning_rate=0.3, num_trees=5, subsample_feature_rate=1, n_iter_no_change=True, tol=0.0001, bin_num=32, predict_param=<federatedml.param.predict_param.PredictParam object at 0x7f9323de7a50>, cv_param=<federatedml.param.cross_validation_param.CrossValidationParam object at 0x7f93205ac810>, validation_freqs=None, metrics=None, random_seed=100, binning_error=0.0001)


HeteroBoostingParam (BoostingParam)


Parameters

encrypt_param : EncryptParam object. Encryption method used in SecureBoost. Default: EncryptParam()

encrypted_mode_calculator_param : EncryptedModeCalculatorParam object. The calculation mode used in SecureBoost. Default: EncryptedModeCalculatorParam()
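encrypt_param selects the scheme that protects gradients and hessians in the Hetero SecureBoost flow described earlier, where the host (passive party) sums encrypted gradients per histogram bin without seeing them and the guest (active party) decrypts only the sums. The sketch below illustrates that idea with textbook Paillier, an additively homomorphic scheme, using tiny hard-coded primes and an assumed fixed-point scale. It is insecure and is not FATE's EncryptParam implementation; all function names are hypothetical.

```python
import math
import random
from typing import Dict, List

# Toy textbook Paillier parameters: tiny primes, for illustration only (NOT secure).
P, Q = 293, 433
N = P * Q
N2 = N * N
G = N + 1
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # lcm(p - 1, q - 1)
SCALE = 100  # fixed-point scale for float gradients (assumption)


def _l(x: int) -> int:
    return (x - 1) // N


MU = pow(_l(pow(G, LAM, N2)), -1, N)  # modular inverse, Python 3.8+


def encrypt(m: int) -> int:
    r = random.randrange(1, N)
    while math.gcd(r, N) != 1:
        r = random.randrange(1, N)
    return pow(G, m, N2) * pow(r, N, N2) % N2


def decrypt(c: int) -> int:
    return _l(pow(c, LAM, N2)) * MU % N


def encode(x: float) -> int:
    # map a signed float into Z_N using fixed-point scaling
    return round(x * SCALE) % N


def decode(m: int) -> float:
    # plaintexts above N/2 represent negative numbers
    if m > N // 2:
        m -= N
    return m / SCALE


def encrypted_histogram(enc_grads: List[int], bins: List[int]) -> Dict[int, int]:
    """Host side: sum encrypted gradients per bin without decrypting them."""
    hist: Dict[int, int] = {}
    for c, b in zip(enc_grads, bins):
        # multiplying Paillier ciphertexts adds the underlying plaintexts
        hist[b] = hist[b] * c % N2 if b in hist else c
    return hist


# Guest side: encrypt per-sample gradients and send them to the host.
grads = [0.25, -0.5, 0.75, -0.1, 0.3]
bins = [0, 1, 0, 1, 2]  # host's bin index of one feature for each sample
hist = encrypted_histogram([encrypt(encode(g)) for g in grads], bins)
# Guest side: decrypt the per-bin sums returned by the host.
plain = {b: decode(decrypt(c)) for b, c in sorted(hist.items())}
print(plain)  # {0: 1.0, 1: -0.6, 2: 0.3}
```

The hessian histogram works the same way with a second set of ciphertexts.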

Source code in federatedml/param/boosting_param.py

class HeteroBoostingParam(BoostingParam):
    """
    Parameters
    ----------
    encrypt_param : EncodeParam Object
        encrypt method use in secure boost, default: EncryptParam()

    encrypted_mode_calculator_param: EncryptedModeCalculatorParam object
        the calculation mode use in secureboost,
        default: EncryptedModeCalculatorParam()
    """

    def __init__(self, task_type=consts.CLASSIFICATION,
                 objective_param=ObjectiveParam(),
                 learning_rate=0.3, num_trees=5, subsample_feature_rate=1, n_iter_no_change=True,
                 tol=0.0001, encrypt_param=EncryptParam(),
                 bin_num=32,
                 encrypted_mode_calculator_param=EncryptedModeCalculatorParam(),
                 predict_param=PredictParam(), cv_param=CrossValidationParam(),
                 validation_freqs=None, early_stopping_rounds=None, metrics=None, use_first_metric_only=False,
                 random_seed=100, binning_error=consts.DEFAULT_RELATIVE_ERROR):

        super(HeteroBoostingParam, self).__init__(task_type, objective_param, learning_rate, num_trees,
                                                  subsample_feature_rate, n_iter_no_change, tol, bin_num,
                                                  predict_param, cv_param, validation_freqs, metrics=metrics,
                                                  random_seed=random_seed,
                                                  binning_error=binning_error)

        self.encrypt_param = copy.deepcopy(encrypt_param)
        self.encrypted_mode_calculator_param = copy.deepcopy(encrypted_mode_calculator_param)
        self.early_stopping_rounds = early_stopping_rounds
        self.use_first_metric_only = use_first_metric_only

    def check(self):

        super(HeteroBoostingParam, self).check()
        self.encrypted_mode_calculator_param.check()
        self.encrypt_param.check()

        if self.early_stopping_rounds is None:
            pass
        elif isinstance(self.early_stopping_rounds, int):
            if self.early_stopping_rounds < 1:
                raise ValueError("early stopping rounds should be larger than 0 when it's integer")
            if self.validation_freqs is None:
                raise ValueError("validation freqs must be set when early stopping is enabled")

        if not isinstance(self.use_first_metric_only, bool):
            raise ValueError("use_first_metric_only should be a boolean")

        return True
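check() above only accepts early_stopping_rounds together with validation_freqs, because the stopping rule is evaluated on validation scores. A minimal sketch of the documented rule follows, assuming one metric value per validation epoch; early_stop_index is a hypothetical helper, not FATE's callback code.

```python
from typing import List, Optional


def early_stop_index(scores: List[float], early_stopping_rounds: int,
                     higher_is_better: bool = True) -> Optional[int]:
    """Return the position in `scores` at which training would stop, or None.

    `scores` holds one metric value per validation epoch (assumption). Training
    stops once the best score has not improved for `early_stopping_rounds`
    consecutive validations.
    """
    if early_stopping_rounds < 1:
        raise ValueError("early stopping rounds should be larger than 0 when it's integer")
    best: Optional[float] = None
    rounds_without_improvement = 0
    for i, score in enumerate(scores):
        improved = best is None or (score > best if higher_is_better else score < best)
        if improved:
            best = score
            rounds_without_improvement = 0
        else:
            rounds_without_improvement += 1
            if rounds_without_improvement >= early_stopping_rounds:
                return i
    return None


# AUC at successive validation epochs; no improvement after the fourth value
print(early_stop_index([0.70, 0.75, 0.74, 0.76, 0.76, 0.75], early_stopping_rounds=2))  # 5
```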

__init__(self, task_type='classification', objective_param=ObjectiveParam(), learning_rate=0.3, num_trees=5, subsample_feature_rate=1, n_iter_no_change=True, tol=0.0001, encrypt_param=EncryptParam(), bin_num=32, encrypted_mode_calculator_param=EncryptedModeCalculatorParam(), predict_param=PredictParam(), cv_param=CrossValidationParam(), validation_freqs=None, early_stopping_rounds=None, metrics=None, use_first_metric_only=False, random_seed=100, binning_error=0.0001)


HeteroSecureBoostParam (HeteroBoostingParam)


Define the boosting tree parameters used in federated ML.

Parameters

task_type : {'classification', 'regression'}, default: 'classification'. Task type.

tree_param : DecisionTreeParam object, default: DecisionTreeParam(). Decision tree parameters.

objective_param : ObjectiveParam object, default: ObjectiveParam(). Objective parameters.

learning_rate : float, int or long. The learning rate of SecureBoost. Default: 0.3

num_trees : int or float. The maximum number of trees to build. Default: 5

subsample_feature_rate : float. The feature subsampling rate, a number in [0, 1]. Default: 1.0

random_seed : int. Seed that controls all random functions. Default: 100

n_iter_no_change : bool. When True, tree building stops once the residual error is less than tol. Default: True

encrypt_param : EncryptParam object. Encryption method used in SecureBoost. Default: EncryptParam(). This parameter applies only to Hetero SecureBoost.

bin_num : positive integer greater than 1. Number of bins used in quantile binning. Default: 32

encrypted_mode_calculator_param : EncryptedModeCalculatorParam object. The calculation mode used in SecureBoost. Default: EncryptedModeCalculatorParam(). This parameter applies only to Hetero SecureBoost.

use_missing : bool. Whether to use missing values in the training process. Default: False

zero_as_missing : bool. Whether to treat 0 as a missing value; takes effect only if use_missing=True. Default: False

validation_freqs : None, positive integer, or Python container object. Controls validation during training. If None, no validation is performed during training. If a positive integer, data is validated every validation_freqs epochs. If a container, data is validated at the epochs it contains; e.g. validation_freqs = [10, 15] validates at epochs 10 and 15. Default: None. A value of 1 is suggested; a larger value speeds up training by skipping validation rounds. When the value is larger than 1, choose one that divides num_trees evenly; otherwise the validation scores of the last training iteration will be missed.

early_stopping_rounds : integer larger than 0. Training stops if any metric on any validation dataset does not improve in the last early_stopping_rounds rounds. validation_freqs must be set, and the early-stopping condition is checked at every validation epoch.

metrics : list, default: []. Metrics used for evaluation during training. If empty, default metrics are used: ['root_mean_squared_error', 'mean_absolute_error'] for regression tasks, ['auc', 'ks'] for binary classification tasks, and ['accuracy', 'precision', 'recall'] for multi-classification tasks.

use_first_metric_only : bool. Use only the first metric for early stopping.

complete_secure : bool. If True, the first tree is built using only guest features.

sparse_optimization : This parameter is abandoned in FATE-1.7.1.

run_goss : bool. Activates Gradient-based One-Side Sampling (GOSS), which selects large-gradient and small-gradient samples according to top_rate and other_rate.

top_rate : float. The retain ratio of large-gradient data; used when run_goss is True. Default: 0.2

other_rate : float. The retain ratio of small-gradient data; used when run_goss is True. Default: 0.1. The sum of top_rate and other_rate must be smaller than 1.

cipher_compress_error : This parameter is now abandoned.

cipher_compress : bool, default: True. Use cipher compression to reduce computation and transfer cost.
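The run_goss, top_rate and other_rate parameters above refer to Gradient-based One-Side Sampling. The sketch below implements GOSS as formulated for LightGBM: keep the top_rate fraction of samples by gradient magnitude, randomly sample an other_rate fraction of the rest, and up-weight the sampled ones by (1 - top_rate) / other_rate. Whether FATE applies exactly this re-weighting is an assumption, and goss_sample is a hypothetical name.

```python
import random
from typing import List, Tuple


def goss_sample(grads: List[float], hess: List[float], top_rate: float, other_rate: float,
                seed: int = 100) -> List[Tuple[int, float, float]]:
    """Gradient-based One-Side Sampling (LightGBM formulation).

    Returns (sample_index, gradient, hessian) for every retained sample.
    """
    if other_rate <= 0 or top_rate < 0:
        raise ValueError("top_rate and other_rate should be positive")
    if top_rate + other_rate >= 1:
        raise ValueError("sum of top rate and other rate should be smaller than 1")
    n = len(grads)
    # rank samples by gradient magnitude, largest first
    order = sorted(range(n), key=lambda i: abs(grads[i]), reverse=True)
    top_n, other_n = int(n * top_rate), int(n * other_rate)
    top_idx = order[:top_n]
    # randomly sample a fraction of the remaining small-gradient samples
    other_idx = random.Random(seed).sample(order[top_n:], other_n)
    # up-weight sampled small-gradient samples so gradient sums stay roughly unbiased
    factor = (1 - top_rate) / other_rate
    kept = [(i, grads[i], hess[i]) for i in top_idx]
    kept += [(i, grads[i] * factor, hess[i] * factor) for i in other_idx]
    return kept


grads = [0.9, -0.1, 0.05, -0.8, 0.3, 0.2, -0.05, 0.01, 0.6, -0.4]
kept = goss_sample(grads, [0.25] * len(grads), top_rate=0.2, other_rate=0.3)
print([i for i, _, _ in kept[:2]])  # [0, 3]: the two largest |gradient| samples
```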

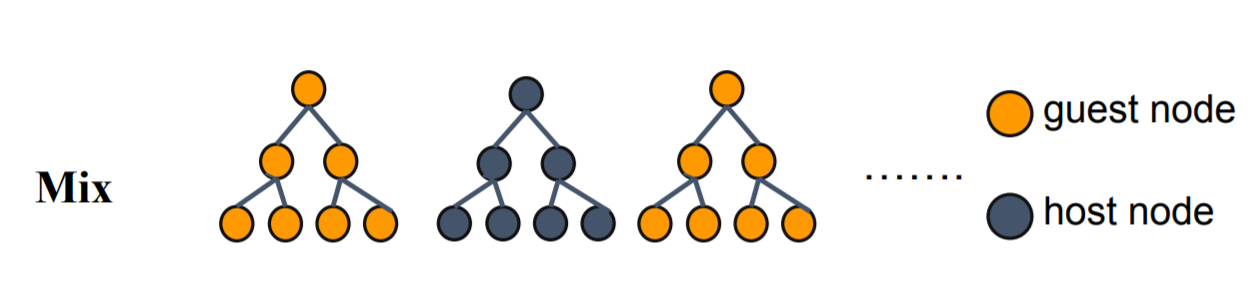

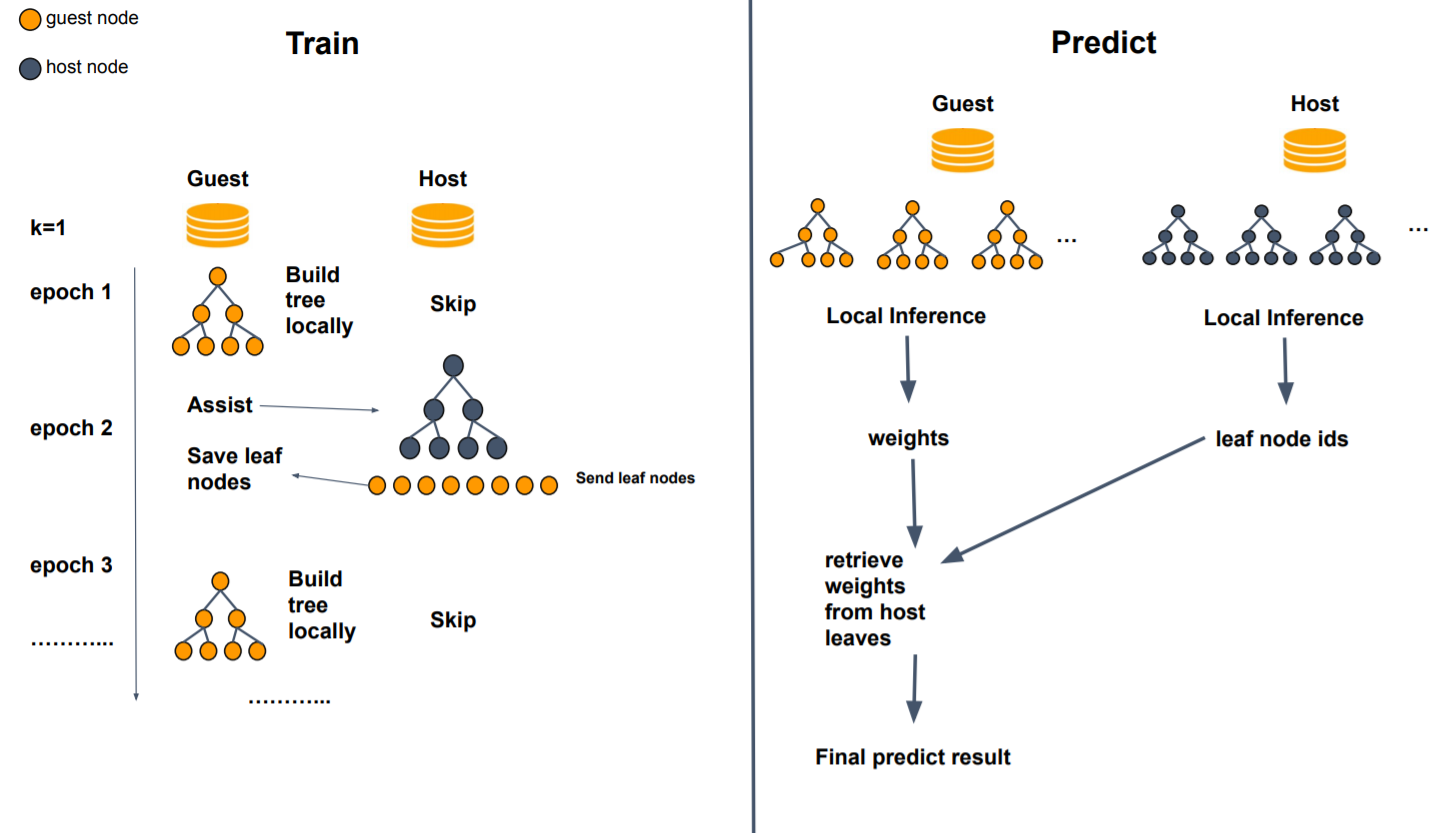

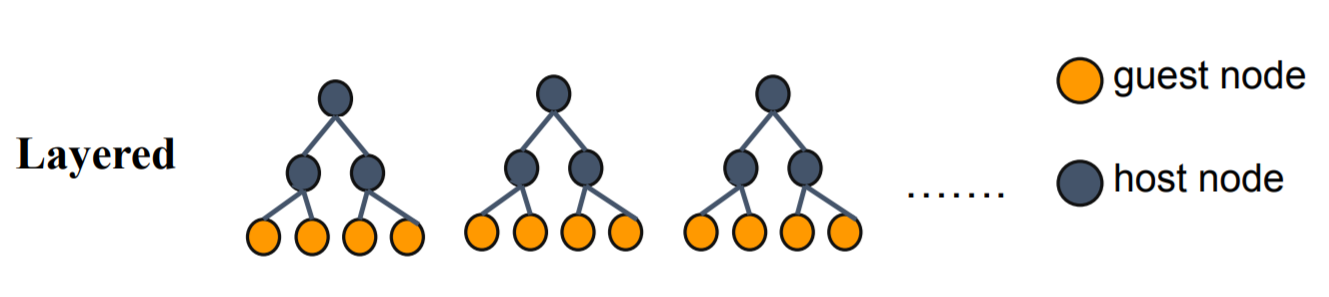

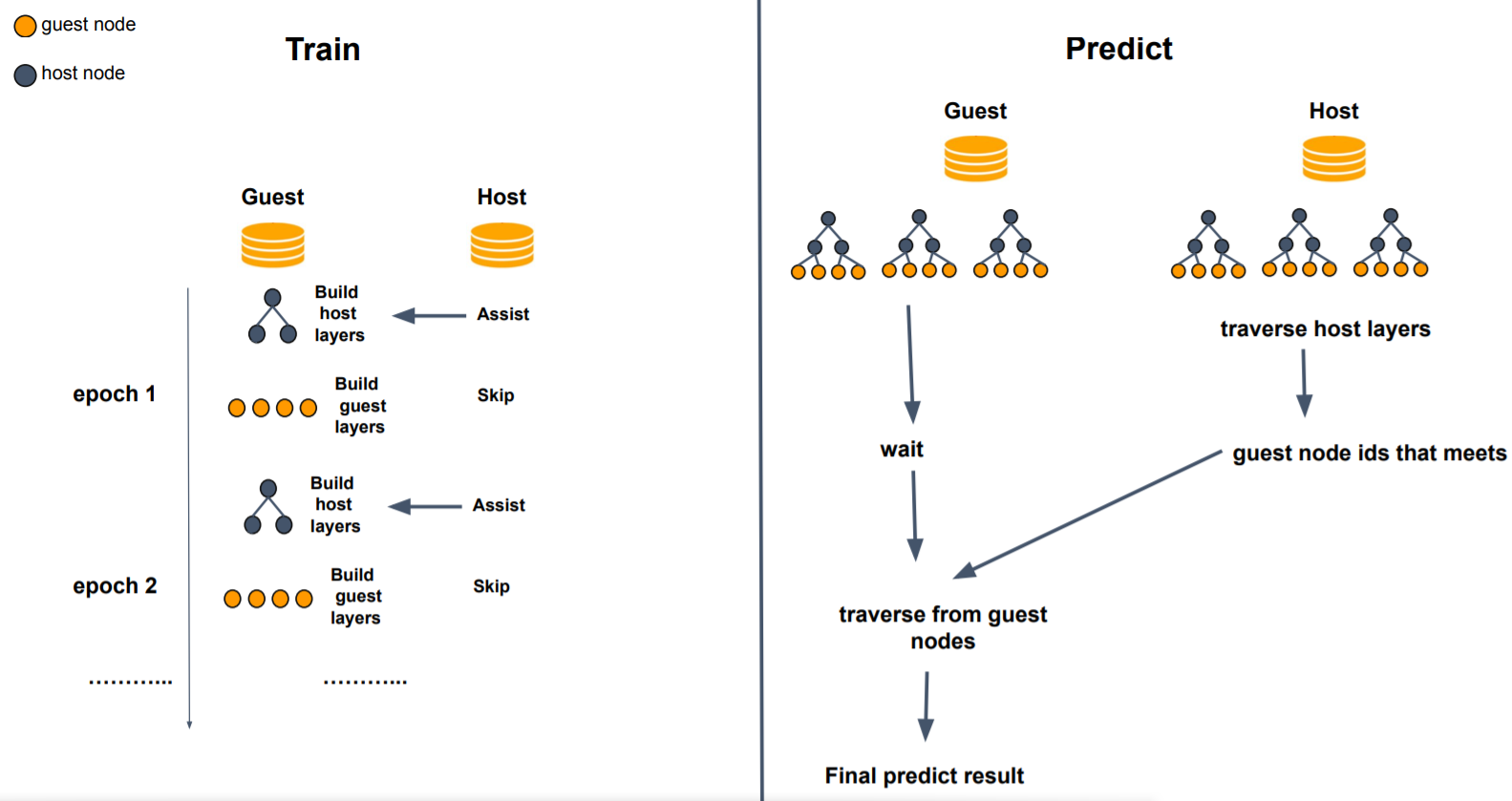

boosting_strategy : str, default: 'std'. One of:

std: standard SBT setting.

mix: alternate between guest and host features when building trees. For example, the first 'tree_num_per_party' trees use guest features, the next 'tree_num_per_party' trees use host features, and so on.

layered: supports only two parties. In layered mode, the first 'host_depth' layers use host features, and the next 'guest_depth' layers use only guest features.

work_mode : str. Has the same function as boosting_strategy, but is deprecated; if set, it overrides boosting_strategy.

tree_num_per_party : int. Each party alternately builds 'tree_num_per_party' trees until the maximum number of trees is reached. Valid when boosting_strategy is mix. Default: 1

guest_depth : int. The guest builds the last guest_depth layers of each decision tree using guest features. Valid when boosting_strategy is layered. Default: 2

host_depth : int. The host builds the first host_depth layers of each decision tree using host features. Valid when boosting_strategy is layered. Default: 3
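The mix and layered strategies above decide which party's features a tree, or a layer of a tree, may use. A hypothetical sketch of that assignment follows, assuming 0-based tree and depth indices, parties taking turns in the listed order with the guest first, and layered trees whose total depth is host_depth + guest_depth.

```python
from typing import Sequence


def mix_tree_party(tree_idx: int, tree_num_per_party: int, parties: Sequence[str]) -> str:
    """Party whose features tree `tree_idx` (0-based) uses when boosting_strategy is 'mix'."""
    if tree_num_per_party < 1:
        raise ValueError("tree_num_per_party should be a positive integer")
    # each party builds tree_num_per_party consecutive trees, then the next party takes over
    return parties[(tree_idx // tree_num_per_party) % len(parties)]


def layered_party(depth: int, host_depth: int, guest_depth: int) -> str:
    """Party whose features split nodes at `depth` (0-based) when boosting_strategy is 'layered'."""
    if 0 <= depth < host_depth:
        return "host"  # the first host_depth layers use host features
    if host_depth <= depth < host_depth + guest_depth:
        return "guest"  # the next guest_depth layers use guest features
    raise ValueError("depth is outside the host_depth + guest_depth layers of a layered tree")


print([mix_tree_party(t, 2, ["guest", "host"]) for t in range(6)])
# ['guest', 'guest', 'host', 'host', 'guest', 'guest']
print([layered_party(d, host_depth=3, guest_depth=2) for d in range(5)])
# ['host', 'host', 'host', 'guest', 'guest']
```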

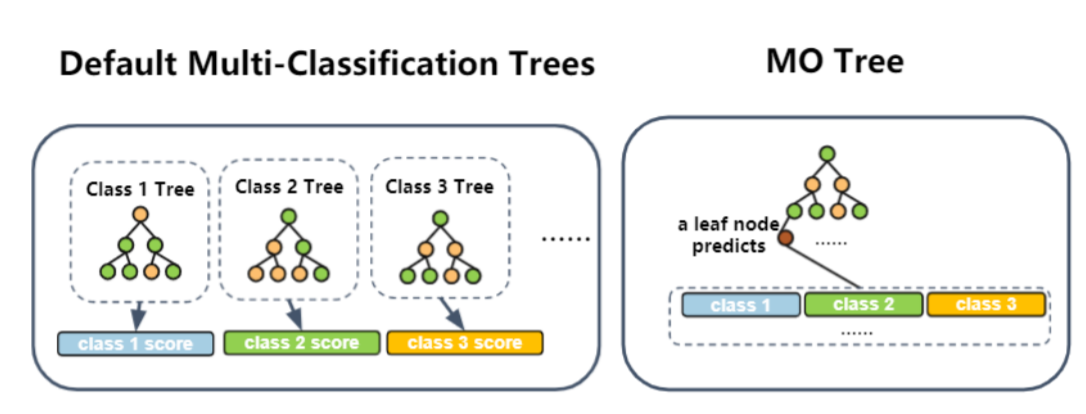

multi_mode : str, default: 'single_output'. The mode used for multi-classification tasks:

single_output: the standard GBDT multi-classification strategy.

multi_output: every leaf gives a multi-dimensional prediction; this mode can save time by learning a model with fewer trees. It requires boosting_strategy 'std' and cipher_compress=True.

EINI_inference : bool, default: False. Changes the inference algorithm used in predict tasks to a secure prediction method that hides the decision path, enhancing security in the inference step. The method is inspired by the EINI inference algorithm. Mix mode does not support EINI and falls back to the default prediction method.

EINI_random_mask : bool, default: False. Multiplies the prediction result by a random float to obscure the original prediction, further enhancing the security of the naive EINI algorithm. With this option, SecureBoost returns masked scores that differ from the original prediction scores.

EINI_complexity_check : bool, default: False. Checks the complexity of tree models when running EINI. Complex models hide their decision paths easily, while simple tree models do not; therefore, a tree model that is too simple is not allowed to run EINI prediction.
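The cipher_compress option listed above reduces computation and transfer cost. One common way to achieve this, assumed here rather than taken from FATE's code, is plaintext packing: several fixed-point values share one integer, so a single ciphertext carries both a gradient and a hessian, and sums of packed values can still be split apart. The constants and function names below are hypothetical.

```python
from typing import Tuple

SCALE = 2 ** 20   # fixed-point precision (assumption)
G_OFFSET = 1.0    # shifts gradients into [0, 2]; assumes |g| <= 1, e.g. logistic loss
H_BITS = 40       # bits reserved for the summed hessian field (assumption)


def pack(g: float, h: float) -> int:
    """Pack one (gradient, hessian) pair into a single non-negative integer."""
    if not (-G_OFFSET <= g <= G_OFFSET) or h < 0:
        raise ValueError("gradient out of range or negative hessian")
    g_int = round((g + G_OFFSET) * SCALE)
    h_int = round(h * SCALE)
    return (g_int << H_BITS) | h_int


def unpack_sum(packed_sum: int, count: int) -> Tuple[float, float]:
    """Recover (sum of gradients, sum of hessians) from a sum of `count` packed values.

    Valid only while the summed hessian field stays below 2 ** H_BITS (no carry).
    """
    h_sum = (packed_sum & ((1 << H_BITS) - 1)) / SCALE
    # remove the offset that was added once per packed sample
    g_sum = (packed_sum >> H_BITS) / SCALE - count * G_OFFSET
    return g_sum, h_sum


grads = [0.5, -0.25, 0.125]
hess = [0.25, 0.1875, 0.0625]
# In SecureBoost the packed values would be encrypted and summed homomorphically;
# plain integer addition stands in for that here.
total = sum(pack(g, h) for g, h in zip(grads, hess))
print(unpack_sum(total, len(grads)))  # (0.375, 0.5)
```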

Source code in federatedml/param/boosting_param.py

class HeteroSecureBoostParam(HeteroBoostingParam):
    """
    Define boosting tree parameters that used in federated ml.

    Parameters
    ----------
    task_type : {'classification', 'regression'}, default: 'classification'
        task type

    tree_param : DecisionTreeParam Object, default: DecisionTreeParam()
        tree param

    objective_param : ObjectiveParam Object, default: ObjectiveParam()
        objective param

    learning_rate : float, int or long
        the learning rate of secure boost. default: 0.3

    num_trees : int or float
        the max number of trees to build. default: 5

    subsample_feature_rate : float
        a float-number in [0, 1], default: 1.0

    random_seed: int
        seed that controls all random functions

    n_iter_no_change : bool,
        when True and residual error less than tol, tree building process will stop. default: True

    encrypt_param : EncodeParam Object
        encrypt method use in secure boost, default: EncryptParam(), this parameter
        is only for hetero-secureboost

    bin_num: positive integer greater than 1
        bin number use in quantile. default: 32

    encrypted_mode_calculator_param: EncryptedModeCalculatorParam object
        the calculation mode use in secureboost, default: EncryptedModeCalculatorParam(), only for hetero-secureboost

    use_missing: bool
        use missing value in training process or not. default: False

    zero_as_missing: bool
        regard 0 as missing value or not, will be use only if use_missing=True, default: False

    validation_freqs: None or positive integer or container object in python
        Do validation in training process or Not.
        if equals None, will not do validation in train process;
        if equals positive integer, will validate data every validation_freqs epochs passes;
        if container object in python, will validate data if epochs belong to this container.
        e.g. validation_freqs = [10, 15], will validate data when epoch equals to 10 and 15.
        Default: None
        The default value is None, 1 is suggested. You can set it to a number larger than 1 in order to
        speed up training by skipping validation rounds. When it is larger than 1, a number which is
        divisible by "num_trees" is recommended, otherwise, you will miss the validation scores
        of last training iteration.

    early_stopping_rounds: integer larger than 0
        will stop training if one metric of one validation data
        doesn’t improve in last early_stopping_round rounds,
        need to set validation freqs and will check early_stopping every at every validation epoch,

    metrics: list, default: []
        Specify which metrics to be used when performing evaluation during training process.
        If set as empty, default metrics will be used. For regression tasks, default metrics are
        ['root_mean_squared_error', 'mean_absolute_error'], For binary-classificatiin tasks, default metrics
        are ['auc', 'ks']. For multi-classification tasks, default metrics are ['accuracy', 'precision', 'recall']

    use_first_metric_only: bool
        use only the first metric for early stopping

    complete_secure: bool
        if use complete_secure, when use complete secure, build first tree using only guest features

    sparse_optimization:
        this parameter is abandoned in FATE-1.7.1

    run_goss: bool
        activate Gradient-based One-Side Sampling, which selects large gradient and small
        gradient samples using top_rate and other_rate.

    top_rate: float, the retain ratio of large gradient data, used when run_goss is True

    other_rate: float, the retain ratio of small gradient data, used when run_goss is True

    cipher_compress_error: This param is now abandoned

    cipher_compress: bool, default is True, use cipher compressing to reduce computation cost and transfer cost

    boosting_strategy:str
        std: standard sbt setting
        mix: alternate using guest/host features to build trees. For example, the first 'tree_num_per_party' trees
             use guest features,
             the second k trees use host features, and so on
        layered: only support 2 party, when running layered mode, first 'host_depth' layer will use host features,
                 and then next 'guest_depth' will only use guest features

    work_mode: str
        This parameter has the same function as boosting_strategy, but is deprecated

    tree_num_per_party: int, every party will alternate build 'tree_num_per_party' trees until reach max tree num, this
                        param is valid when boosting_strategy is mix

    guest_depth: int, guest will build last guest_depth of a decision tree using guest features, is valid when boosting_strategy
                 is layered

    host_depth: int, host will build first host_depth of a decision tree using host features, is valid when work boosting_strategy
                layered

    multi_mode: str, decide which mode to use when running multi-classification task:
        single_output standard gbdt multi-classification strategy
        multi_output every leaf give a multi-dimension predict, using multi_mode can save time
                     by learning a model with less trees.

    EINI_inference: bool
        default is False, this option changes the inference algorithm used in predict tasks.
        a secure prediction method that hides decision path to enhance security in the inference
        step. This method is insprired by EINI inference algorithm.

    EINI_random_mask: bool
        default is False
        multiply predict result by a random float number to confuse original predict result. This operation further
        enhances the security of naive EINI algorithm.

    EINI_complexity_check: bool
        default is False
        check the complexity of tree models when running EINI algorithms. Complexity models are easy to hide their
        decision path, while simple tree models are not, therefore if a tree model is too simple, it is not allowed
        to run EINI predict algorithms.
    """

    def __init__(self, tree_param: DecisionTreeParam = DecisionTreeParam(), task_type=consts.CLASSIFICATION,
                 objective_param=ObjectiveParam(),
                 learning_rate=0.3, num_trees=5, subsample_feature_rate=1.0, n_iter_no_change=True,
                 tol=0.0001, encrypt_param=EncryptParam(),
                 bin_num=32,
                 encrypted_mode_calculator_param=EncryptedModeCalculatorParam(),
                 predict_param=PredictParam(), cv_param=CrossValidationParam(),
                 validation_freqs=None, early_stopping_rounds=None, use_missing=False, zero_as_missing=False,
                 complete_secure=False, metrics=None, use_first_metric_only=False, random_seed=100,
                 binning_error=consts.DEFAULT_RELATIVE_ERROR,
                 sparse_optimization=False, run_goss=False, top_rate=0.2, other_rate=0.1,
                 cipher_compress_error=None, cipher_compress=True, new_ver=True, boosting_strategy=consts.STD_TREE,
                 work_mode=None, tree_num_per_party=1, guest_depth=2, host_depth=3, callback_param=CallbackParam(),
                 multi_mode=consts.SINGLE_OUTPUT, EINI_inference=False, EINI_random_mask=False,
                 EINI_complexity_check=False):

        super(HeteroSecureBoostParam, self).__init__(task_type, objective_param, learning_rate, num_trees,
                                                     subsample_feature_rate, n_iter_no_change, tol, encrypt_param,
                                                     bin_num, encrypted_mode_calculator_param, predict_param, cv_param,
                                                     validation_freqs, early_stopping_rounds, metrics=metrics,
                                                     use_first_metric_only=use_first_metric_only,
                                                     random_seed=random_seed,
                                                     binning_error=binning_error)

        self.tree_param = copy.deepcopy(tree_param)
        self.zero_as_missing = zero_as_missing
        self.use_missing = use_missing
        self.complete_secure = complete_secure
        self.sparse_optimization = sparse_optimization
        self.run_goss = run_goss
        self.top_rate = top_rate
        self.other_rate = other_rate
        self.cipher_compress_error = cipher_compress_error
        self.cipher_compress = cipher_compress
        self.new_ver = new_ver
        self.EINI_inference = EINI_inference
        self.EINI_random_mask = EINI_random_mask
        self.EINI_complexity_check = EINI_complexity_check
        self.boosting_strategy = boosting_strategy
        self.work_mode = work_mode
        self.tree_num_per_party = tree_num_per_party
        self.guest_depth = guest_depth
        self.host_depth = host_depth
        self.callback_param = copy.deepcopy(callback_param)
        self.multi_mode = multi_mode

    def check(self):

        super(HeteroSecureBoostParam, self).check()
        self.tree_param.check()
        if not isinstance(self.use_missing, bool):
            raise ValueError('use missing should be bool type')
        if not isinstance(self.zero_as_missing, bool):
            raise ValueError('zero as missing should be bool type')
        self.check_boolean(self.complete_secure, 'complete_secure')
        self.check_boolean(self.run_goss, 'run goss')
        self.check_decimal_float(self.top_rate, 'top rate')
        self.check_decimal_float(self.other_rate, 'other rate')
        self.check_positive_number(self.other_rate, 'other_rate')
        self.check_positive_number(self.top_rate, 'top_rate')
        self.check_boolean(self.new_ver, 'code version switcher')
        self.check_boolean(self.cipher_compress, 'cipher compress')
        self.check_boolean(self.EINI_inference, 'eini inference')
        self.check_boolean(self.EINI_random_mask, 'eini random mask')
        self.check_boolean(self.EINI_complexity_check, 'eini complexity check')

        if self.EINI_inference and self.EINI_random_mask:
            LOGGER.warning('To protect the inference decision path, notice that current setting will multiply'
                           ' predict result by a random number, hence SecureBoost will return confused predict scores'
                           ' that is not the same as the original predict scores')

        if self.work_mode == consts.MIX_TREE and self.EINI_inference:
            LOGGER.warning('Mix tree mode does not support EINI, use default predict setting')

        if self.work_mode is not None:
            self.boosting_strategy = self.work_mode

        if self.multi_mode not in [consts.SINGLE_OUTPUT, consts.MULTI_OUTPUT]:
            raise ValueError('unsupported multi-classification mode')
        if self.multi_mode == consts.MULTI_OUTPUT:
            if self.boosting_strategy != consts.STD_TREE:
                raise ValueError('MO trees only works when boosting strategy is std tree')
            if not self.cipher_compress:
                raise ValueError('Mo trees only works when cipher compress is enabled')

        if self.boosting_strategy not in [consts.STD_TREE, consts.LAYERED_TREE, consts.MIX_TREE]:
            raise ValueError('unknown sbt boosting strategy{}'.format(self.boosting_strategy))

        for p in ["early_stopping_rounds", "validation_freqs", "metrics",
                  "use_first_metric_only"]:
            # if self._warn_to_deprecate_param(p, "", ""):
            if self._deprecated_params_set.get(p):
                if "callback_param" in self.get_user_feeded():
                    raise ValueError(f"{p} and callback param should not be set simultaneously,"
                                     f"{self._deprecated_params_set}, {self.get_user_feeded()}")
                else:
                    self.callback_param.callbacks = ["PerformanceEvaluate"]
                break

        descr = "boosting_param's"

        if self._warn_to_deprecate_param("validation_freqs", descr, "callback_param's 'validation_freqs'"):
            self.callback_param.validation_freqs = self.validation_freqs

        if self._warn_to_deprecate_param("early_stopping_rounds", descr, "callback_param's 'early_stopping_rounds'"):
            self.callback_param.early_stopping_rounds = self.early_stopping_rounds

        if self._warn_to_deprecate_param("metrics", descr, "callback_param's 'metrics'"):
            self.callback_param.metrics = self.metrics

        if self._warn_to_deprecate_param("use_first_metric_only", descr, "callback_param's 'use_first_metric_only'"):
            self.callback_param.use_first_metric_only = self.use_first_metric_only

        if self.top_rate + self.other_rate >= 1:
            raise ValueError('sum of top rate and other rate should be smaller than 1')

        return True
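check() combines several cross-parameter rules: a deprecated work_mode overrides boosting_strategy, multi_output trees require the std strategy and cipher_compress, and top_rate + other_rate must stay below 1, while the parent HeteroBoostingParam.check() requires validation_freqs whenever early_stopping_rounds is set. The standalone sketch below mirrors those rules on a hypothetical SBTConfig dataclass so a job configuration can be sanity-checked without FATE installed. It assumes the string values 'std', 'mix', 'layered', 'single_output' and 'multi_output' for the consts used in the source.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SBTConfig:
    """Hypothetical stand-in for the HeteroSecureBoostParam fields involved in cross-checks."""
    boosting_strategy: str = "std"
    work_mode: Optional[str] = None
    multi_mode: str = "single_output"
    cipher_compress: bool = True
    top_rate: float = 0.2
    other_rate: float = 0.1
    early_stopping_rounds: Optional[int] = None
    validation_freqs: Optional[int] = None


def resolve_and_check(cfg: SBTConfig) -> SBTConfig:
    # parent-class rule: early stopping is evaluated on validation scores
    if cfg.early_stopping_rounds is not None and cfg.validation_freqs is None:
        raise ValueError("validation freqs must be set when early stopping is enabled")
    # the deprecated work_mode, when given, overrides boosting_strategy
    if cfg.work_mode is not None:
        cfg.boosting_strategy = cfg.work_mode
    if cfg.multi_mode not in ("single_output", "multi_output"):
        raise ValueError("unsupported multi-classification mode")
    if cfg.multi_mode == "multi_output":
        if cfg.boosting_strategy != "std":
            raise ValueError("MO trees only work when boosting strategy is std")
        if not cfg.cipher_compress:
            raise ValueError("MO trees only work when cipher compress is enabled")
    if cfg.boosting_strategy not in ("std", "layered", "mix"):
        raise ValueError(f"unknown sbt boosting strategy {cfg.boosting_strategy}")
    if cfg.top_rate + cfg.other_rate >= 1:
        raise ValueError("sum of top rate and other rate should be smaller than 1")
    return cfg


print(resolve_and_check(SBTConfig(work_mode="mix")).boosting_strategy)  # mix
```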

__init__(self, tree_param=DecisionTreeParam(), task_type='classification', objective_param=ObjectiveParam(), learning_rate=0.3, num_trees=5, subsample_feature_rate=1.0, n_iter_no_change=True, tol=0.0001, encrypt_param=EncryptParam(), bin_num=32, encrypted_mode_calculator_param=EncryptedModeCalculatorParam(), predict_param=PredictParam(), cv_param=CrossValidationParam(), validation_freqs=None, early_stopping_rounds=None, use_missing=False, zero_as_missing=False, complete_secure=False, metrics=None, use_first_metric_only=False, random_seed=100, binning_error=0.0001, sparse_optimization=False, run_goss=False, top_rate=0.2, other_rate=0.1, cipher_compress_error=None, cipher_compress=True, new_ver=True, boosting_strategy='std', work_mode=None, tree_num_per_party=1, guest_depth=2, host_depth=3, callback_param=CallbackParam(), multi_mode='single_output', EINI_inference=False, EINI_random_mask=False, EINI_complexity_check=False)


HomoSecureBoostParam (BoostingParam)


Parameters

backend : {'distributed', 'memory'}. Decides which backend to use when computing histograms for Homo SBT. Default: the distributed backend.

Source code in federatedml/param/boosting_param.py

class HomoSecureBoostParam(BoostingParam):
    """
    Parameters
    ----------
    backend: {'distributed', 'memory'}
        decides which backend to use when computing histograms for homo-sbt
    """

    def __init__(self, tree_param: DecisionTreeParam = DecisionTreeParam(), task_type=consts.CLASSIFICATION,
                 objective_param=ObjectiveParam(),
                 learning_rate=0.3, num_trees=5, subsample_feature_rate=1, n_iter_no_change=True,
                 tol=0.0001, bin_num=32, predict_param=PredictParam(), cv_param=CrossValidationParam(),
                 validation_freqs=None, use_missing=False, zero_as_missing=False, random_seed=100,
                 binning_error=consts.DEFAULT_RELATIVE_ERROR, backend=consts.DISTRIBUTED_BACKEND,
                 callback_param=CallbackParam(), multi_mode=consts.SINGLE_OUTPUT):
        super(HomoSecureBoostParam, self).__init__(task_type=task_type,
                                                   objective_param=objective_param,
                                                   learning_rate=learning_rate,
                                                   num_trees=num_trees,
                                                   subsample_feature_rate=subsample_feature_rate,
                                                   n_iter_no_change=n_iter_no_change,
                                                   tol=tol,
                                                   bin_num=bin_num,
                                                   predict_param=predict_param,
                                                   cv_param=cv_param,
                                                   validation_freqs=validation_freqs,
                                                   random_seed=random_seed,
                                                   binning_error=binning_error
                                                   )
        self.use_missing = use_missing
        self.zero_as_missing = zero_as_missing
        self.tree_param = copy.deepcopy(tree_param)
        self.backend = backend
        self.callback_param = copy.deepcopy(callback_param)
        self.multi_mode = multi_mode

def check(self):

super(HomoSecureBoostParam, self).check()

self.tree_param.check()

if not isinstance(self.use_missing, bool):

raise ValueError('use missing should be bool type')

if not isinstance(self.zero_as_missing, bool):

raise ValueError('zero as missing should be bool type')

if self.backend not in [consts.MEMORY_BACKEND, consts.DISTRIBUTED_BACKEND]:

raise ValueError('unsupported backend')

if self.multi_mode not in [consts.SINGLE_OUTPUT, consts.MULTI_OUTPUT]:

raise ValueError('unsupported multi-classification mode')

for p in ["validation_freqs", "metrics"]:

# if self._warn_to_deprecate_param(p, "", ""):

if self._deprecated_params_set.get(p):

if "callback_param" in self.get_user_feeded():

raise ValueError(f"{p} and callback param should not be set simultaneously,"

f"{self._deprecated_params_set}, {self.get_user_feeded()}")

else:

self.callback_param.callbacks = ["PerformanceEvaluate"]

break

descr = "boosting_param's"

if self._warn_to_deprecate_param("validation_freqs", descr, "callback_param's 'validation_freqs'"):

self.callback_param.validation_freqs = self.validation_freqs

if self._warn_to_deprecate_param("metrics", descr, "callback_param's 'metrics'"):

self.callback_param.metrics = self.metrics

if self.multi_mode not in [consts.SINGLE_OUTPUT, consts.MULTI_OUTPUT]:

raise ValueError('unsupported multi-classification mode')

if self.multi_mode == consts.MULTI_OUTPUT:

if self.task_type == consts.REGRESSION:

raise ValueError('regression tasks not support multi-output trees')

return True
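To see which option combinations `check()` accepts without installing FATE, its option rules can be restated as a small standalone function. This is a sketch only: `check_homo_sbt_options` is hypothetical, it skips the inherited `BoostingParam.check()`, `tree_param.check()` and the callback handling, and while `'distributed'`, `'memory'`, `'classification'` and `'single_output'` appear on this page, the `'multi_output'` and `'regression'` strings are assumed values of the corresponding `consts` entries.

```python
# Assumed string values mirroring FATE's consts module (see lead-in).
MEMORY_BACKEND = "memory"
DISTRIBUTED_BACKEND = "distributed"
SINGLE_OUTPUT = "single_output"
MULTI_OUTPUT = "multi_output"
CLASSIFICATION = "classification"
REGRESSION = "regression"


def check_homo_sbt_options(task_type: str = CLASSIFICATION,
                           backend: str = DISTRIBUTED_BACKEND,
                           multi_mode: str = SINGLE_OUTPUT,
                           use_missing: bool = False,
                           zero_as_missing: bool = False) -> bool:
    """Restates the option checks of HomoSecureBoostParam.check() in isolation."""
    if not isinstance(use_missing, bool):
        raise ValueError("use missing should be bool type")
    if not isinstance(zero_as_missing, bool):
        raise ValueError("zero as missing should be bool type")
    if backend not in (MEMORY_BACKEND, DISTRIBUTED_BACKEND):
        raise ValueError("unsupported backend")
    if multi_mode not in (SINGLE_OUTPUT, MULTI_OUTPUT):
        raise ValueError("unsupported multi-classification mode")
    # Multi-output trees are rejected for regression tasks.
    if multi_mode == MULTI_OUTPUT and task_type == REGRESSION:
        raise ValueError("regression tasks not support multi-output trees")
    return True


# Usage: an in-memory backend with multi-output trees is valid for classification.
check_homo_sbt_options(backend="memory", multi_mode="multi_output")
```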

__init__(self, tree_param=DecisionTreeParam(), task_type='classification', objective_param=ObjectiveParam(), learning_rate=0.3, num_trees=5, subsample_feature_rate=1, n_iter_no_change=True, tol=0.0001, bin_num=32, predict_param=PredictParam(), cv_param=CrossValidationParam(), validation_freqs=None, use_missing=False, zero_as_missing=False, random_seed=100, binning_error=0.0001, backend='distributed', callback_param=CallbackParam(), multi_mode='single_output')



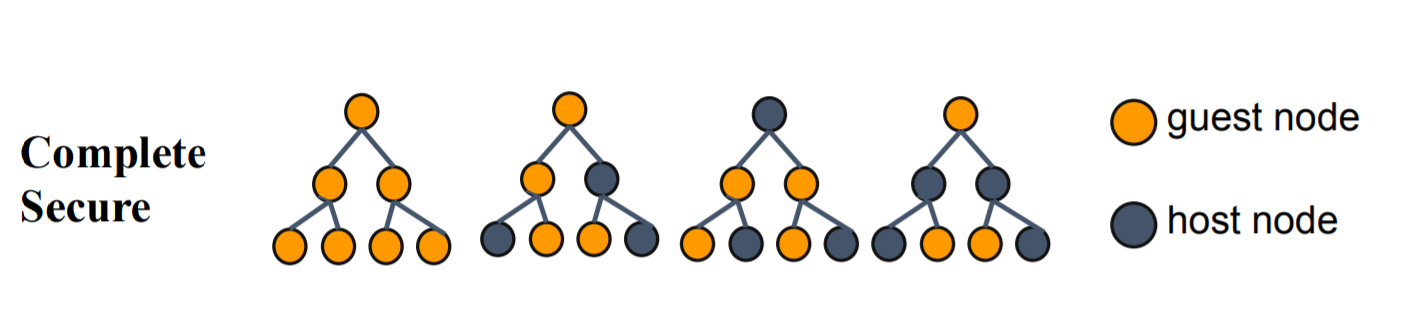

Hetero Complete SecureBoost

Hetero SecureBoost now offers a new option, complete_secure. When it is enabled, the boosting model uses only guest features to build the first decision tree. According to SecureBoost: A Lossless Federated Learning Framework, this helps avoid label leakage.
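The effect of `complete_secure` on split selection can be sketched as a filter on the candidate splits: while the first tree (index 0) is being built, only guest-side candidates are considered, and later trees use every party's features as usual. This is a simplified illustration, assuming candidate splits are available as `(party, feature, gain)` tuples; `best_split` is a hypothetical helper that ignores encryption and the (feature, split_bin_val) encoding described earlier.

```python
from typing import List, Tuple

# (party, feature_name, gain) describing one candidate split for a node.
Candidate = Tuple[str, str, float]


def best_split(candidates: List[Candidate], tree_idx: int,
               complete_secure: bool) -> Candidate:
    """Pick the highest-gain split; with complete_secure, tree 0 may only
    split on guest features."""
    if complete_secure and tree_idx == 0:
        candidates = [c for c in candidates if c[0] == "guest"]
    if not candidates:
        raise ValueError("no split candidates available")
    return max(candidates, key=lambda c: c[2])


cands = [("guest", "x0", 0.4), ("host", "h3", 0.9), ("guest", "x2", 0.6)]
print(best_split(cands, tree_idx=0, complete_secure=True))  # ('guest', 'x2', 0.6)
print(best_split(cands, tree_idx=1, complete_secure=True))  # ('host', 'h3', 0.9)
```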

Examples

## Hetero SecureBoost Configuration Usage Guide

#### Example Tasks

1. Binary-Class:

example-data: (1) guest: breast_hetero_guest.csv (2) host: breast_hetero_host.csv

dsl: test_secureboost_train_dsl.json

runtime_config: test_secureboost_train_binary_conf.json

2. Multi-Class:

example-data: (1) guest: vehicle_scale_hetero_guest.csv

(2) host: vehicle_scale_hetero_host.csv

dsl: test_secureboost_train_dsl.json

runtime_config: test_secureboost_train_multi_conf.json

3. Regression:

example-data: (1) guest: student_hetero_guest.csv

(2) host: student_hetero_host.csv

dsl: test_secureboost_train_dsl.json

runtime_config: test_secureboost_train_regression_conf.json

4. Multi-Host Regression:

example-data: (1) guest: motor_hetero_guest.csv

(2) host1: motor_hetero_host_1.csv

(3) host2: motor_hetero_host_2.csv

dsl: test_secureboost_train_dsl.json

runtime_config: test_secureboost_train_regression_multi_host_conf.json

5. Binary-Class With Missing Values:

example-data: (1) guest: ionosphere_scale_hetero_guest.csv

(2) host: ionosphere_scale_hetero_host.csv

dsl: test_secureboost_train_dsl.json

runtime_config: test_secureboost_train_binary_with_missing_value_conf.json

Since FATE-1.1, this example also evaluates data during the training process; see the "validation_freqs" field in runtime_config.

6. Early Stopping:

example-data: (1) guest: student_hetero_guest.csv

(2) host: student_hetero_host.csv

dsl: test_secureboost_train_dsl.json

runtime_config: test_secureboost_train_with_early_stopping_conf.json
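Examples 5 and 6 rely on in-training validation (`validation_freqs`) and early stopping (`early_stopping_rounds`). Roughly, an integer `validation_freqs` validates every that many epochs, a container validates at the listed epochs, and early stopping ends training once the metric has failed to improve for `early_stopping_rounds` consecutive validations. The sketch below models that scheduling under stated assumptions: 0-based epoch indices and a higher-is-better metric. `should_validate` and `stop_epoch` are hypothetical helpers, not FATE functions.

```python
from typing import Container, Iterable, Optional, Union

Freqs = Union[None, int, Container[int]]


def should_validate(epoch: int, validation_freqs: Freqs) -> bool:
    """Decide whether to evaluate after a given epoch (0-based, assumed)."""
    if validation_freqs is None:
        return False
    if isinstance(validation_freqs, int):
        # Every `validation_freqs` epochs: with 2, epochs 1, 3, 5, ...
        return (epoch + 1) % validation_freqs == 0
    # Otherwise only at the explicitly listed epochs.
    return epoch in validation_freqs


def stop_epoch(scores: Iterable[float], validation_freqs: Freqs,
               early_stopping_rounds: int) -> Optional[int]:
    """Return the epoch at which training would stop, or None if it never stops.

    scores yields the validation score after each epoch (higher is better);
    a score is only looked at on validation epochs.
    """
    best = float("-inf")
    since_best = 0
    for epoch, score in enumerate(scores):
        if not should_validate(epoch, validation_freqs):
            continue
        if score > best:
            best, since_best = score, 0
        else:
            since_best += 1
            if since_best >= early_stopping_rounds:
                return epoch
    return None
```

For instance, with `validation_freqs=1` and `early_stopping_rounds=2`, the scores `[0.6, 0.7, 0.8, 0.8, 0.79, 0.9]` stop training after epoch 4, before the late improvement is seen.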

#### Cross Validation Class

1. Binary-Class:

example-data: (1) guest: breast_hetero_guest.csv

(2) host: breast_hetero_host.csv

dsl: test_secureboost_cross_validation_dsl.json

runtime_config: test_secureboost_cross_validation_binary_conf.json

2. Multi-Class:

example-data: (1) guest: vehicle_scale_hetero_guest.csv

(2) host: vehicle_scale_hetero_host.csv

dsl: test_secureboost_cross_validation_dsl.json

runtime_config: test_secureboost_cross_validation_multi_conf.json

3. Regression:

example-data: (1) guest: student_hetero_guest.csv

(2) host: student_hetero_host.csv

dsl: test_secureboost_cross_validation_dsl.json

runtime_config: test_secureboost_cross_validation_regression_conf.json

Users can run a task with the following command:

flow job submit -c ${runtime_config} -d ${dsl}

After the training task has completed successfully, the trained model can also be used for prediction.

test_secureboost_train_complete_secure_conf.json

{

"dsl_version": 2,

"initiator": {

"role": "guest",

"party_id": 9999

},

"role": {

"host": [

9998

],

"guest": [

9999

]

},

"component_parameters": {

"common": {

"hetero_secure_boost_0": {

"task_type": "classification",

"objective_param": {

"objective": "cross_entropy"

},

"num_trees": 3,

"validation_freqs": 1,

"encrypt_param": {

"method": "Paillier"

},

"tree_param": {

"max_depth": 3

},

"complete_secure": true

},

"evaluation_0": {

"eval_type": "binary"

}

},

"role": {

"guest": {

"0": {

"data_transform_0": {

"with_label": true,

"output_format": "dense"

},

"data_transform_1": {

"with_label": true,

"output_format": "dense"

},